Chapter II

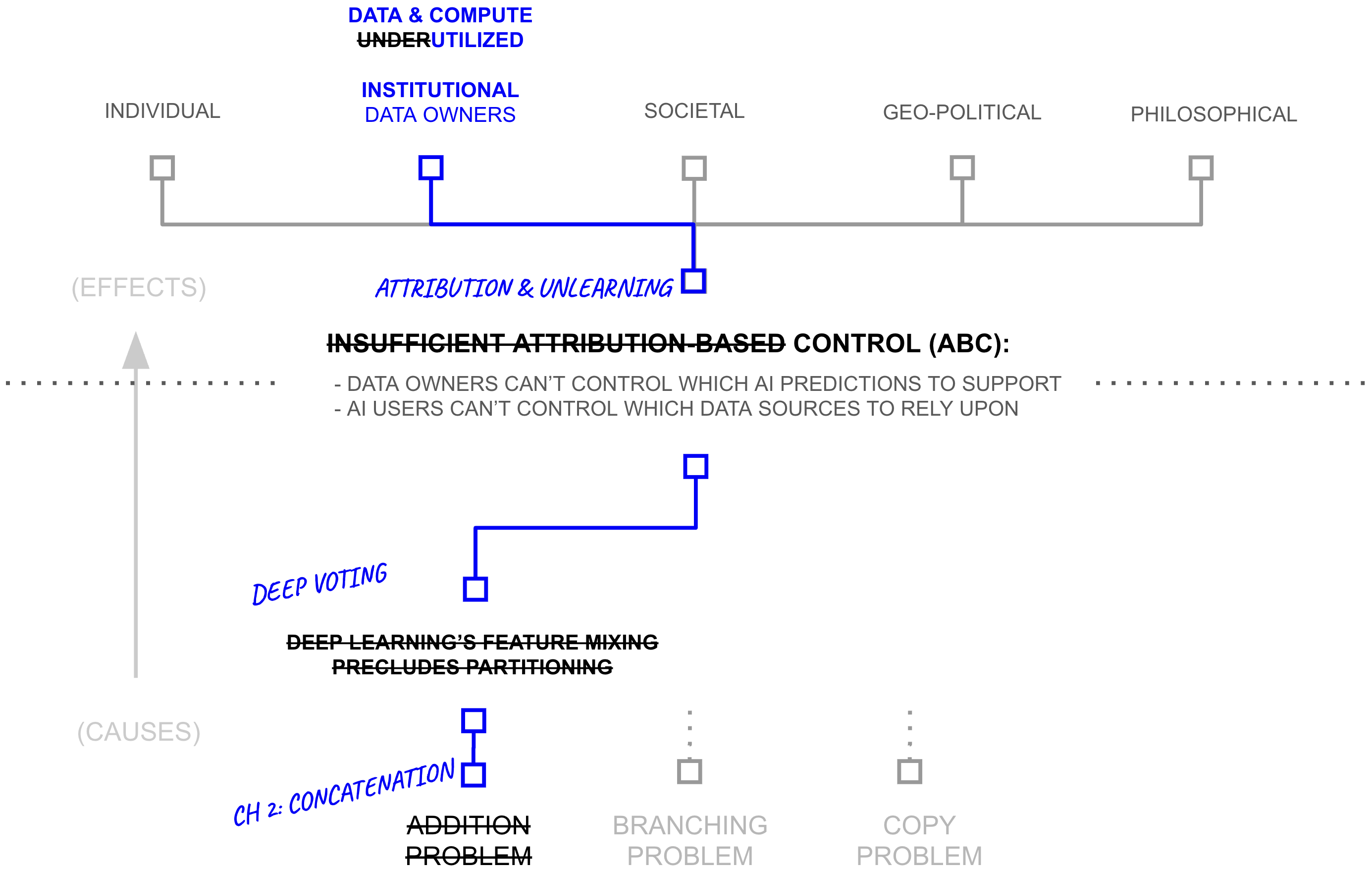

From Deep Learning to Deep Voting

Chapter Summary

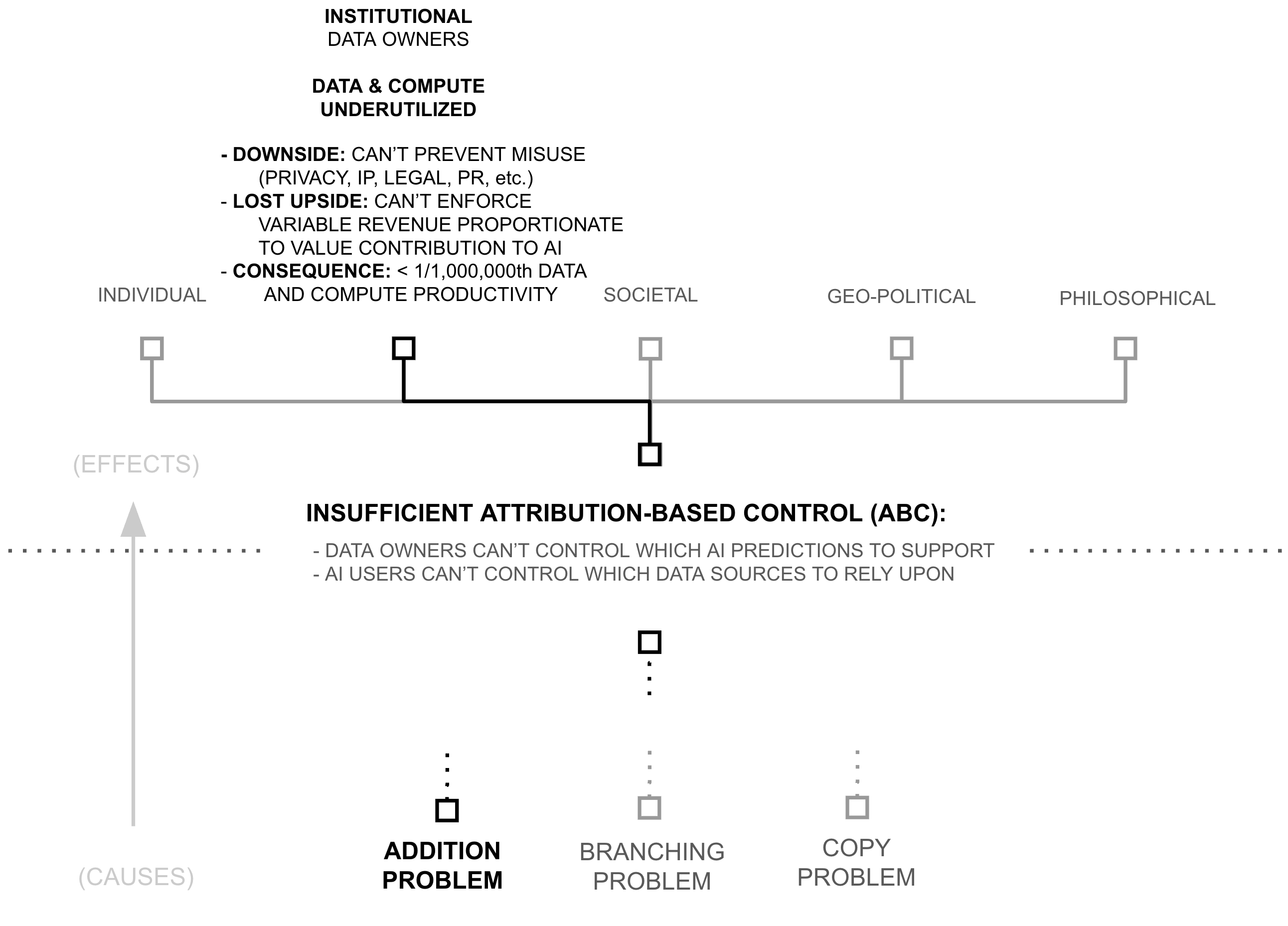

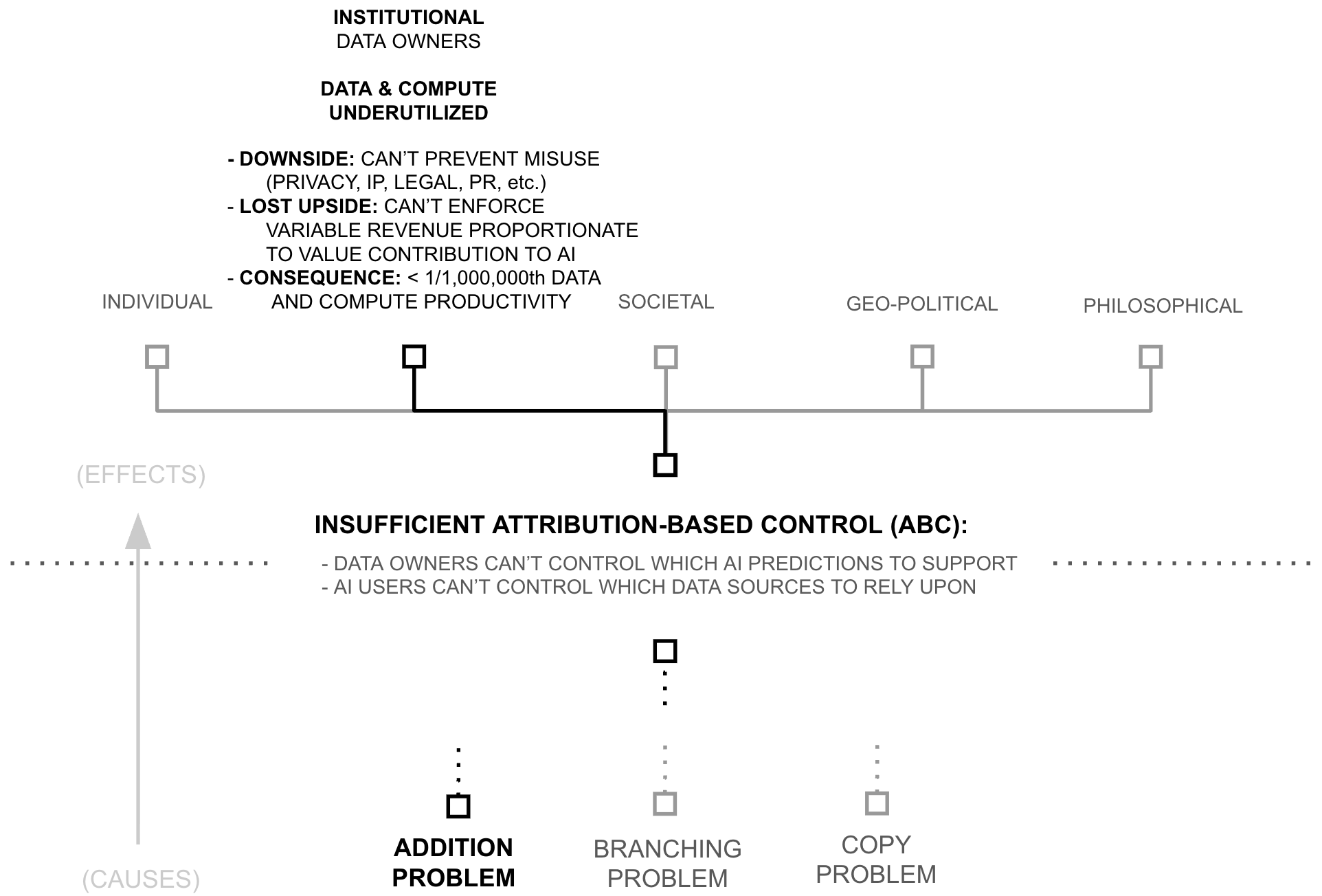

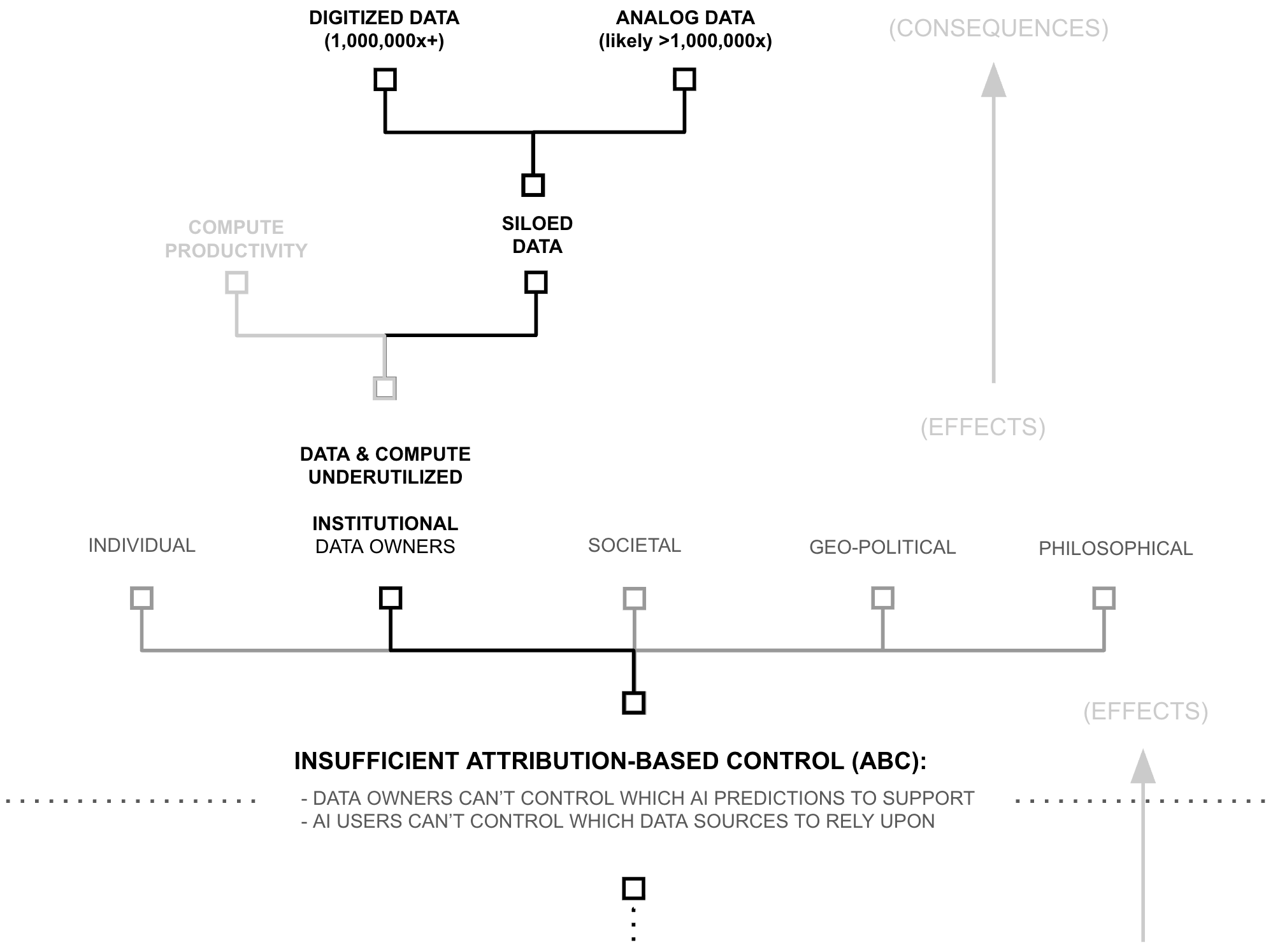

Estimates below suggest that models are trained using less than 1/1,000,000th of the world’s data and AI compute productivity. Consequently, following AI’s scaling laws (Kaplan et al., 2020; Hoffmann et al., 2022), AI models possess capabilities which are insignificant compared to what existing data, compute, and algorithms could create.

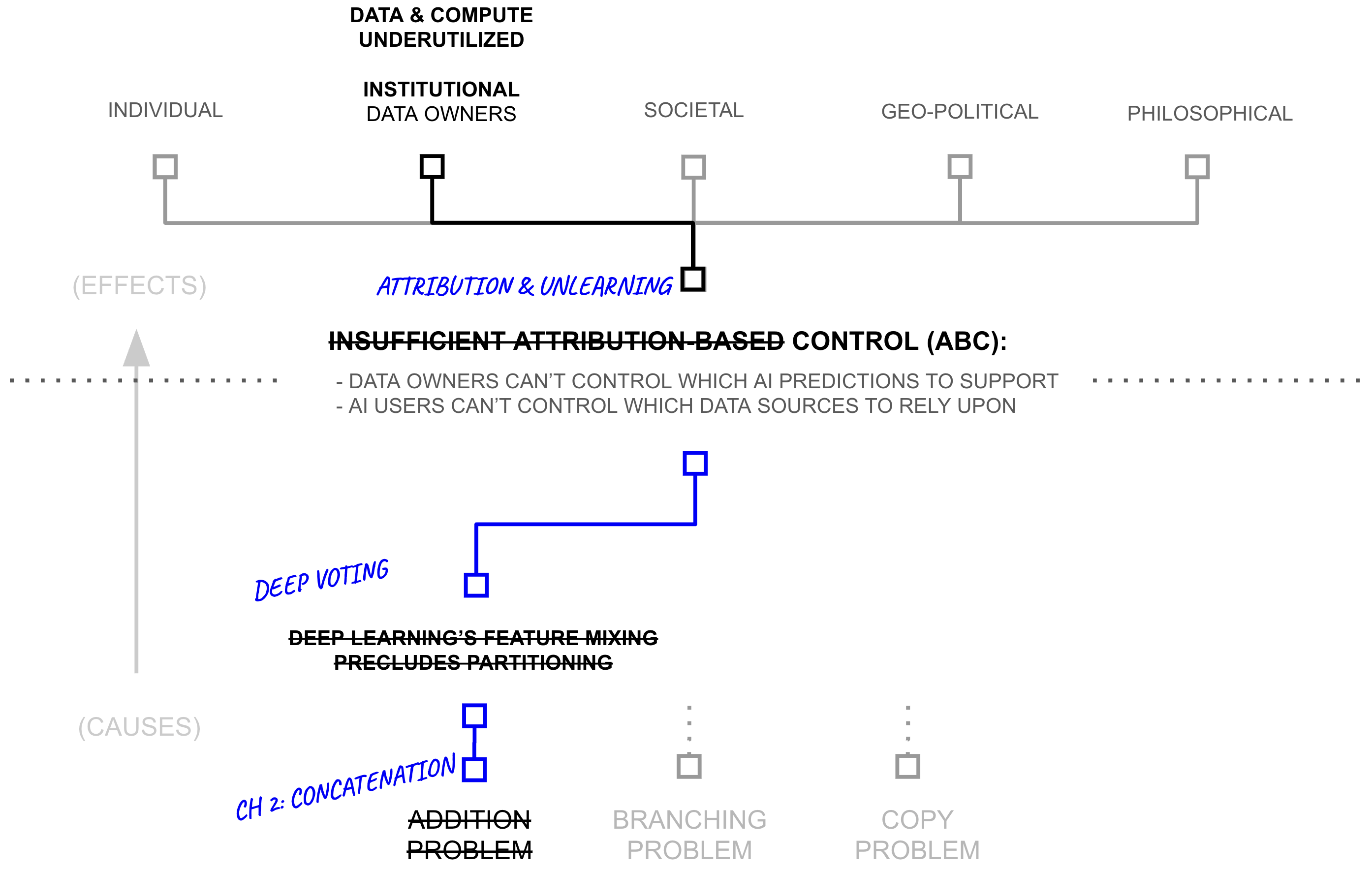

Yet, if AI models (and their capabilities) are the lifeblood of the AI industry, why are data and compute so underutilized? Why is AI capability so constrained? This chapter unpacks the cause of such a drastic resource under-utilization. It begins by linking resource utilization to attribution-based control (ABC). It then breaks attribution-based control into problems with attribution and control, which are themselves underpinned by deep learning’s core philosophy of mixing dense features. This mixing is only problematic because of a specific technical choice: the use of addition to update model weights, which erases provenance information during gradient descent.

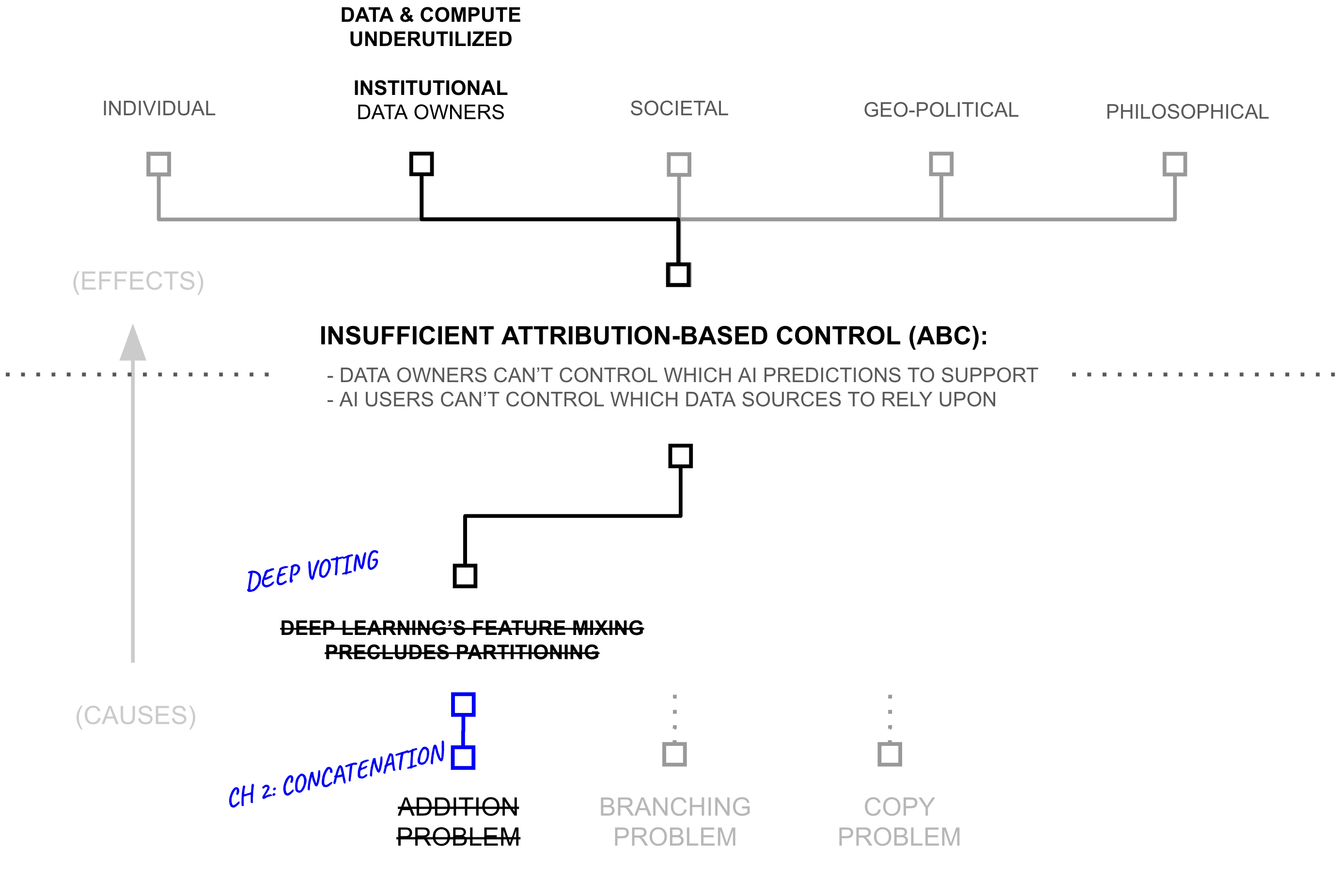

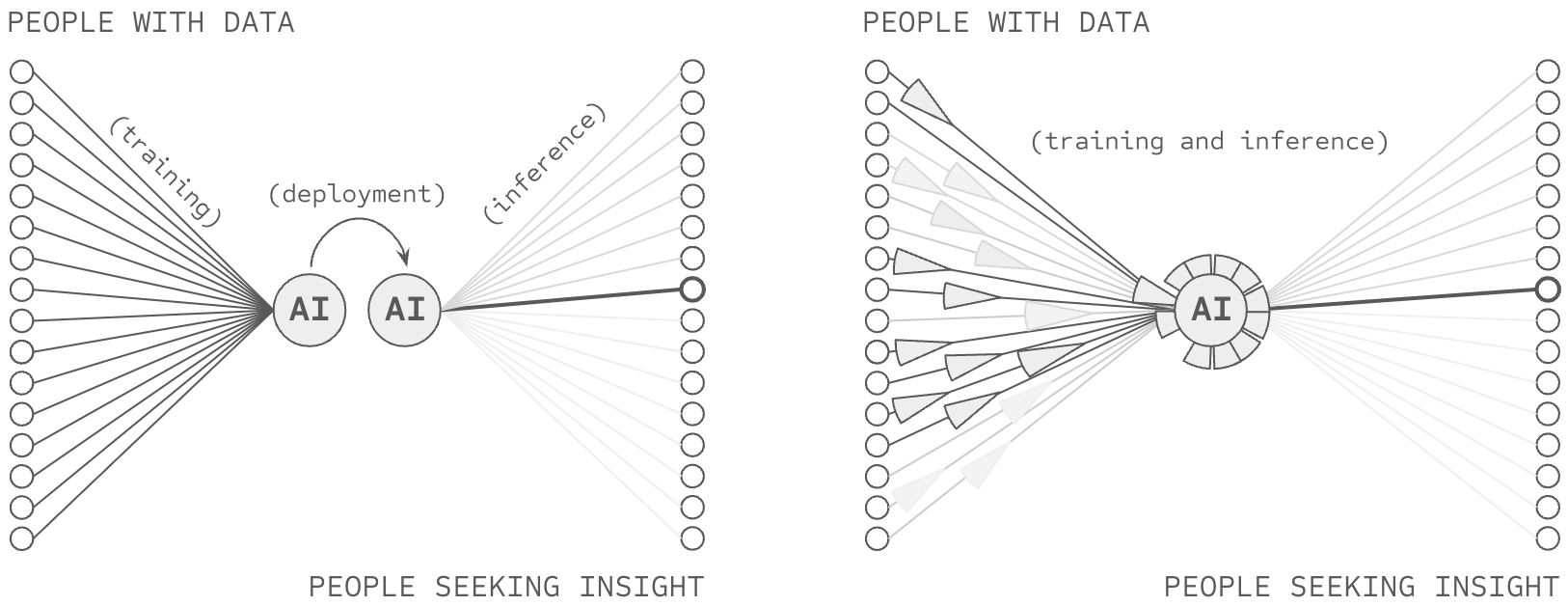

The chapter then explores alternatives to addition during the training process, revealing a fundamental trade off between three factors: AI capability (driven by unrestricted feature learning), attribution (tracking where features came from), and computational complexity (tracking the path of feature mixing). It proposes a key innovation, a re-purposing of differential privacy for attribution: differential attribution, using the natural boundaries of training documents to identify which concepts must mix freely and vice versa, thereby pushing this Pareto frontier by providing a data-driven approach to balance addition and concatenation.

Building on this insight, the chapter develops a specific form of concatenation to replace addition in key sections of the deep learning training process. This transformation—from deep learning to deep voting—cascades upward through the aformentioned hierarchy of problems, reducing the need for dense feature mixing across data sources, enabling attribution-based control, and unlocking a viable path towards another 6+ orders of magnitude of training data and compute productivity. Taken together, the chapter reveals how a seemingly technical choice (the use of addition) creates far-reaching consequences for AI systems, and how careful, data-driven use of concatenation may dramatically expand AI’s access to computational and data resources.

The Symptom: Data/Compute Underutilization

As of NeurIPS 2024, leading AI researchers have reported that available compute and data reserves are approaching saturation, creating constraints on both computational resources and pre-training scale (Robison 2024; Strati et al., 2024). However, this assessment overlooks approximately six orders of magnitude of underutilized compute productivity and siloed data. Rather than absolute scarcity, the industry faces structural problems of data and compute access and productivity.

6+ OOM: Underutilized Training Compute Productivity

The AI industry’s computational requirements have driven significant economic and geopolitical consequences, including NVIDIA’s rise to become the world’s most valuable company, U.S. export restrictions on AI chips to China, and intense competition for latest-generation hardware among startups, enterprises, and major technology firms (Kaye 2025; Kachwala and Bajwa 2025; Howley 2023). However, recent evidence suggests that current AI training and inference processes utilize less than 0.0002% of available compute productivity, indicating that perceived compute scarcity may reflect inefficiency rather than absolute resource limits. To evaluate this claim, this section estimates computational waste in two key activities: inference (forward propagation) and learning (backpropagation and gradient descent).

2-3 OOM: Inefficient AI inference

Due to its role in commercial deployments, analysts estimate that AI firms spend billions of dollars annually on inference (You 2025). However, while these costs might appear to reflect fundamental requirements for achieving high performance, a growing body of empirical work indicates substantial inefficiency in current inference practices.

A Library Analogy

Consider a library. When someone asks a librarian about the rules of chess, the librarian doesn’t subsequently read every book in the library to find the answer. Instead, they use the catalog system to find a relevant bookshelf, the titles of books on that shelf to find the relevant book, and the table of contents of that book to find the relevant section. This practice stands in stark contrast to how AI systems process information. To make an AI prediction with a model like GPT-3, AI users forward propagate through the entire model and all of its knowledge (i.e., read every book in the library). And in the case of large language models, they don’t just do this once per answer, they do this for every token they predict. This is analogous to a librarian reading every book in the library every time they utter a word. Given how implausible it is that any prediction requires the entirety of an AI models knowledge, AI’s full, dense inference is a staggering inefficiency.

AI models store information within their weights. General-purpose models (e.g., Gemini, ChatGPT, Claude, Llama) encode substantial portions of their training corpora (often representing significant fractions of publicly available internet data) in these parameters. However, when models like GPT-3 generate predictions, they forward propagate through every non-embedding parameter in the network, regardless of query relevance. This constitutes a form of exhaustive computation wherein all stored knowledge is activated for each inference, analogous to searching an entire corpus rather than querying relevant subsets.

From an information-theoretic perspective, this practice is inefficient; the relevant question concerns the magnitude of this inefficiency. A comprehensive answer would require empirically measuring the maximum percentage of model weights that can be excluded from inference without degrading accuracy. While such systematic measurement remains incomplete, existing work provides lower bounds on potential efficiency gains.

DeepMind’s RETRO achieves comparable performance to GPT-3 while using 1/25th of the parameters through retrieval from a large-scale vector database (Borgeaud et al., 2022). Similarly, Meta’s ATLAS demonstrates that models can be reduced to 1/50th their original size while maintaining or exceeding baseline performance through database-augmented inference (Izacard et al., 2023).

We adopt RETRO/ATLAS-style parameter efficiency as a conservative lower bound on current compute waste, noting that these approaches have not been widely adopted in either the sparsity literature (Lederer 2024) or frontier AI deployments, nor have comparable efficiency gains been demonstrated through alternative methods (cf. the persistent redundancy problem in Mixture of Experts models (Dai et al., 2024)). These results suggest that at least 96-98% of parameters activated during dense inference are unnecessary for individual queries.

This estimate is likely conservative, as it implies that 2-4% of a model’s knowledge base is relevant to any individual query. 1 However, parameter overuse during forward propagation represents only one source of computational waste. A second form of inefficiency arises in how models store and access information.

A Library Analogy

Consider a library once again. When someone asks a librarian about the rules of chess, and the librarian goes to fetch a particular book, the librarian doesn’t bring back every copy of the book in the library. And, as unintuitive as this might seem, the librarian also doesn’t bring back empty books from random assortments of shelves. Instead, they use the catalog system to find a relevant bookshelf, the titles of books on that shelf to find the relevant book, and then they select a single book for the library’s customer.

This practice stands in stark contrast to how AI systems process information. To make an AI prediction within a model like GPT-3, AI users don’t merely forward propagate through the entire model and all of its knowledge (i.e., read every book in the library), AI users must forward propagate through some mixture of multiple copies of the same information (i.e. multiple copies of the same book) as well as empty vector space (i.e. empty books) in order to create an output.

1 While this fraction seems implausibly large for most queries, systematic measurement of query-specific parameter relevance remains limited in the literature. We therefore retain this conservative 2-4% estimate.

Recent work demonstrates that current architectures contain redundant and underutilized parameters. Guo et al. achieve 5-10x reduction in parameter count through lossless compression while maintaining accuracy: ”Notably, we distill CIFAR-10 and CIFAR-100 to 1/5 and Tiny ImageNet to 1/10 of their original sizes without any performance loss on ConvNet, offering the first lossless method of dataset distillation” (Guo et al., 2023). This compression has been demonstrated across multiple standard architectures, as shown in Table 2.1.

| Dataset | CIFAR-10 | CIFAR-100 | Tiny ImageNet | |||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| IPC | 1 0.02 |

10 0.2 |

50 1 |

500 10 |

1000 20 |

1 0.2 |

10 2 |

50 10 |

100 20 |

1 0.2 |

10 2 |

50 10 |

| Random | 15.4±0.3 | 31.0±0.5 | 50.6±0.3 | 73.2±0.3 | 78.4±0.2 | 4.2±0.3 | 14.6±0.5 | 33.4±0.4 | 42.8±0.3 | 1.4±0.1 | 5.0±0.2 | 15.0±0.4 |

| DC | 28.3±0.5 | 44.9±0.5 | 53.9±0.5 | 72.1±0.4 | 76.6±0.3 | 12.8±0.3 | 25.2±0.3 | - | - | - | - | - |

| DM | 26.0±0.8 | 48.9±0.6 | 63.0±0.4 | 75.1±0.3 | 78.8±0.1 | 11.4±0.3 | 29.7±0.3 | 43.6±0.4 | - | 3.9±0.2 | 12.9±0.4 | 24.1±0.3 |

| DSA | 28.8±0.7 | 52.1±0.5 | 60.6±0.5 | 73.6±0.3 | 78.7±0.3 | 13.9±0.3 | 32.3±0.3 | 42.8±0.4 | - | - | - | - |

| CAFE | 30.3±1.1 | 46.3±0.6 | 55.5±0.6 | - | - | 12.9±0.3 | 27.8±0.3 | 37.9±0.3 | - | - | - | - |

| KIP1 | 49.9±0.2 | 62.7±0.3 | 68.6±0.2 | - | - | 15.7±0.2 | 28.3±0.1 | 37.9±0.3 | - | - | - | - |

| FRePo1 | 46.8±0.7 | 65.5±0.4 | 71.7±0.2 | - | - | 28.7±0.1 | 42.5±0.2 | 44.3±0.2 | - | 15.4±0.3 | 25.4±0.2 | - |

| RCIG1 | 53.9±1.0 | 69.1±0.4 | 73.5±0.3 | - | - | 39.3±0.4 | 44.1±0.4 | 46.7±0.3 | - | 25.6±0.3 | 29.4±0.2 | - |

| MTT2 | 46.2±0.8 | 65.4±0.7 | 71.6±0.2 |

|

|

24.3±0.3 | 39.7±0.4 | 47.7±0.2 | 49.2±0.4 | 8.8±0.3 | 23.2±0.2 | 28.0±0.3 |

| TESLA2 | 48.5±0.8 | 66.4±0.8 | 72.6±0.7 |

|

|

24.8±0.4 | 41.7±0.3 | 47.9±0.3 | 49.2±0.4 | - | - | - |

| FTD2,3 | 46.0±0.4 | 65.3±0.4 | 73.2±0.2 |

|

|

24.4±0.4 | 42.5±0.2 | 48.5±0.3 | 49.7±0.4 | 10.5±0.2 | 23.4±0.3 | 28.2±0.4 |

| DATM (Ours) | 46.9±0.5 | 66.8±0.2 | 76.1±0.3 | 83.5±0.2 | 85.5±0.4 | 27.9±0.2 | 47.2±0.4 | 55.0±0.2 | 57.5±0.2 | 17.1±0.3 | 31.1±0.3 | 39.7±0.3 |

| Full Dataset | 84.8±0.1 | 56.2±0.3 | 37.6±0.4 | |||||||||

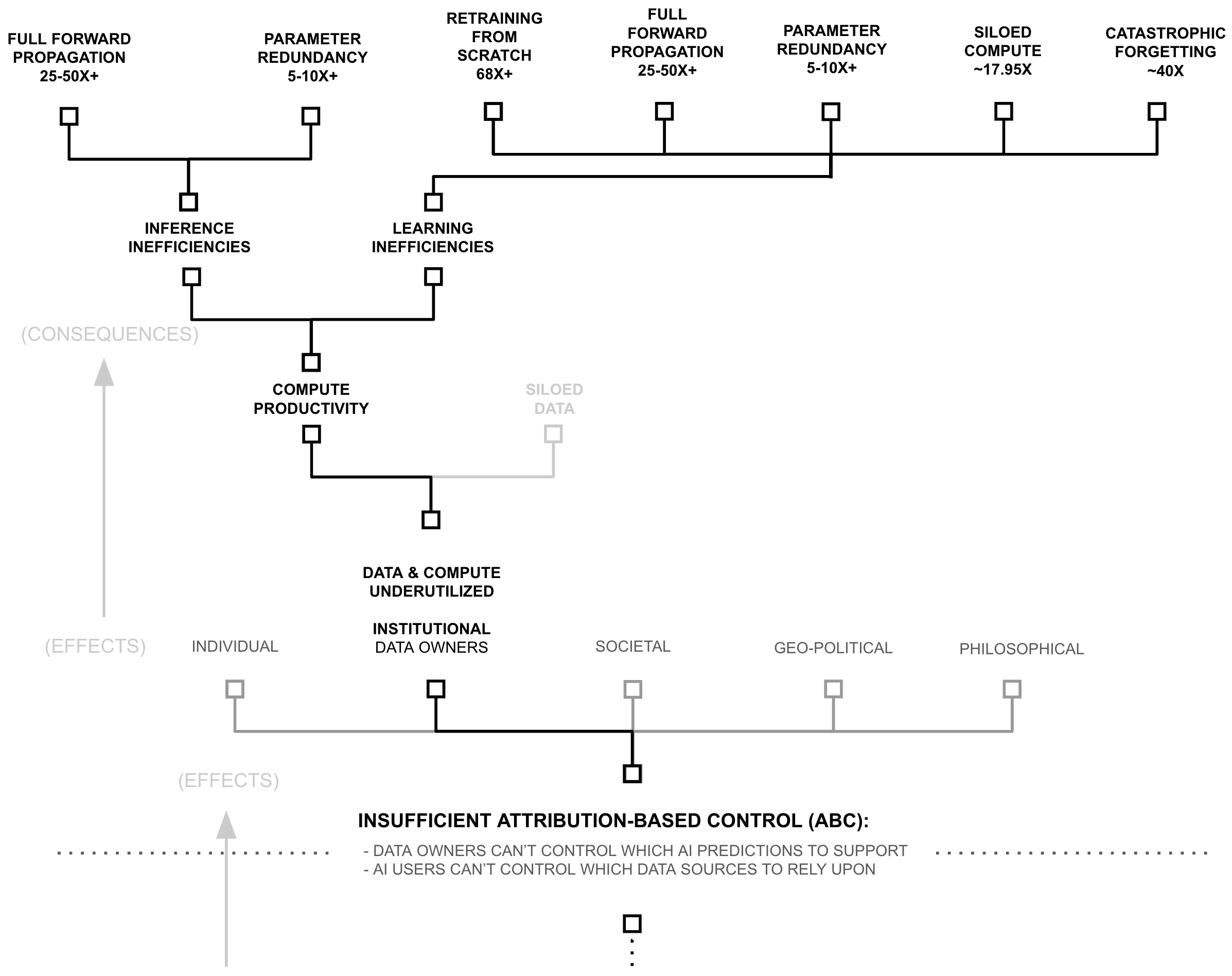

Because they account for information waste in different ways, these inefficiencies compound multiplicatively: irrelevant parameters (25-50x+) and redundant parameters (5-10x+) suggest a 125-500x+ lower-bound to inference inefficiency. And while these are estimates, both bounds come from working implementations that maintain model performance, using techniques which are not widely popular, suggesting this waste is common in frontier AI systems, and that this waste stems from architectural choices rather than fundamental limitations

6 OOM: Underutilized and Inefficient Compute in AI Learning

AI firms famously spend immense amounts of money training their AI models, a point which features heavily in their marketing (Meta AI 2024; Brown et al., 2020; Wiggers 2024). However, despite widespread hype around AI training spend, a theoretical inefficiency is backed by an increasingly large body of empirical observations, suggesting that the compute requirements for training AI models are largely misunderstood. To introduce the theory, consider an analogy.

A Library Analogy

As before, consider a library. When a library adds or removes a significant number of books to/from their collection, they don’t rebuild the entire building and re-print all of their books from scratch, they simply add/remove books, shelves, or rooms. These practices stand in stark contrast to how AI systems process information. To add or remove a significant portion of knowledge from a deep learning system, AI researchers retrain them from scratch (i.e., tear down the entire library, burn all the books, rebuild the library, and re-print all the books from scratch). Despite being a widespread, even ubiquitous practice within AI research, this practice is staggeringly inefficient. It re-characterizes the claims of compute scarcity in an entirely new light. It is like a librarian who repeatedly tears down their library, and re-prints all their books — lamenting insufficient bricks, paper, or ink.

AI models store information within their weights. To acquire this information, models are trained on large corpora at substantial computational cost (e.g., significant portions of the public internet) (Epoch AI 2025). However, when models require substantial updates (either incorporating new information or removing outdated content) current practice involves retraining from scratch (Goodfellow et al., 2016). This approach discards all previously computed parameters and repeats the entire training process, even when the majority of learned representations remain valid.

From an information-theoretic perspective, this practice is inefficient; the question concerns its magnitude. Comprehensive quantification would require detailed documentation of compute allocation within leading AI firms, information that is not publicly available. However, public disclosures and industry analysis provide sufficient data to establish lower bounds on this inefficiency.

Analysis of the largest AI firms reveals that pre-training their most capable models consumes less than 1% of quarterly compute budgets (see Table 2.2; methodology detailed in Appendices I and II). Yet these same firms continue expanding computational infrastructure to support larger models (Mehta 2024; OpenAI 2024; Sevilla and Roldan 2024), suggesting that remaining compute capacity is allocated to other training activities rather than final model production.

| Model | Organization | Lab/Cloud | Train FLOPs |

Parent Org Peak Annual FLOPs |

Model/Public Models (Lab/Cloud) |

Model/Peak Annual (%) |

Model/Peak w/100x (%) |

|---|---|---|---|---|---|---|---|

| Gemini 1.0 Ultra | Google DeepMind | Google DeepMind | $5.00 \times 10^{25}$ | $3.87 \times 10^{28}$ | 45.65 | 0.129 | 12.93 |

| Claude 3.5 Sonnet | Anthropic | Anthropic/Amazon | $4.98 \times 10^{25}$ | $2.27 \times 10^{28}$ | 69.74 | 0.220 | 21.96 |

| GPT-4o | OpenAI | Microsoft/OpenAI | $3.81 \times 10^{25}$ | $4.35 \times 10^{28}$ | 53.36 | 0.088 | 8.75 |

| Llama 3.1-405B | Meta AI | Meta AI | $3.80 \times 10^{25}$ | $5.65 \times 10^{28}$ | 66.32 | 0.067 | 6.72 |

| GPT-4 | OpenAI | Microsoft/OpenAI | $2.10 \times 10^{25}$ | $4.35 \times 10^{28}$ | 29.41 | 0.048 | 4.82 |

| Gemini 1.0 Pro | Google DeepMind | Google DeepMind | $1.83 \times 10^{25}$ | $3.87 \times 10^{28}$ | 16.71 | 0.047 | 4.73 |

| Claude 3 Opus | Anthropic | Anthropic/Amazon | $1.64 \times 10^{25}$ | $2.27 \times 10^{28}$ | 22.97 | 0.072 | 7.23 |

| Gemini 1.5 Pro | Google DeepMind | Google DeepMind | $1.58 \times 10^{25}$ | $3.87 \times 10^{28}$ | 14.43 | 0.041 | 4.09 |

| Llama 3-70B | Meta AI | Meta AI | $7.86 \times 10^{24}$ | $5.65 \times 10^{28}$ | 13.72 | 0.014 | 1.39 |

| GPT-4o mini | OpenAI | Microsoft/OpenAI | $7.36 \times 10^{24}$ | $4.35 \times 10^{28}$ | 10.31 | 0.017 | 1.69 |

| PaLM 2 | Google DeepMind | $7.34 \times 10^{24}$ | $3.87 \times 10^{28}$ | 6.70 | 0.019 | 1.90 | |

| Llama 3.3 | Meta AI | Meta AI | $6.86 \times 10^{24}$ | $5.65 \times 10^{28}$ | 11.98 | 0.012 | 1.21 |

| Amazon Nova Pro | Amazon | Anthropic/Amazon | $6.00 \times 10^{24}$ | $2.27 \times 10^{28}$ | 8.40 | 0.026 | 2.65 |

| Amazon Titan | Amazon | Anthropic/Amazon | $4.80 \times 10^{24}$ | $2.27 \times 10^{28}$ | 6.72 | 0.021 | 2.12 |

| Claude 2 | Anthropic | Anthropic/Amazon | $3.87 \times 10^{24}$ | $2.27 \times 10^{28}$ | 5.41 | 0.017 | 1.70 |

| Minerva (540B) | Google DeepMind | $2.74 \times 10^{24}$ | $3.87 \times 10^{28}$ | 2.50 | 0.007 | 0.71 | |

| GPT-3.5 (text-davinci-003) | OpenAI | Microsoft/OpenAI | $2.58 \times 10^{24}$ | $4.35 \times 10^{28}$ | 3.61 | 0.006 | 0.59 |

| U-PaLM (540B) | Google DeepMind | $2.53 \times 10^{24}$ | $3.87 \times 10^{28}$ | 2.31 | 0.007 | 0.65 | |

| PaLM (540B) | Google Research | Google DeepMind | $2.53 \times 10^{24}$ | $3.87 \times 10^{28}$ | 2.31 | 0.007 | 0.65 |

| Flan-PaLM 540B | Google DeepMind | $2.50 \times 10^{24}$ | $3.87 \times 10^{28}$ | 2.28 | 0.006 | 0.65 | |

| FLAN 137B | Google Research | Google DeepMind | $2.05 \times 10^{24}$ | $3.87 \times 10^{28}$ | 1.87 | 0.005 | 0.53 |

| Meta Movie Gen Video | Meta AI | Meta AI | $2.65 \times 10^{24}$ | $5.65 \times 10^{28}$ | 2.88 | 0.003 | 0.29 |

| Megatron-Turing NLG 530B | Microsoft,NVIDIA | Microsoft/OpenAI | $1.17 \times 10^{24}$ | $4.35 \times 10^{28}$ | 1.64 | 0.003 | 0.27 |

| Llama 2-70B | Meta AI | Meta AI | $8.10 \times 10^{23}$ | $5.65 \times 10^{28}$ | 1.41 | 0.001 | 0.14 |

The contrast between frontier models consuming a small fraction of quarterly compute budgets and ongoing infrastructure expansion suggests that leading AI firms train numerous experimental models beyond their final deployments. This interpretation aligns with widely documented practices in frontier AI laboratories. Major labs employ hundreds to thousands of researchers who routinely train models during development. Standard optimization procedures, such as hyperparameter sweeps, involve training single model architectures tens to hundreds of times to identify optimal configurations (Weights & Biases 2025).

Taken together, producing a final frontier model requires training hundreds to thousands of intermediate models during the R&D process. While hyperparameter optimization is essential to model development, current approaches necessarily involve complete retraining for each configuration. This contrasts with modular systems where components can be incrementally optimized without discarding the entire structure. Recent work on targeted model modification (e.g., LLM surgery (Veldanda et al., 2024)) suggests alternatives to full retraining, but such techniques have not been widely adopted in frontier model development, necessitating substantial computational expenditure during optimization.

Consider the magnitude of this inefficiency. If frontier labs aim to produce general-purpose models, then computational resources allocated to intermediate experimental models represent overhead that does not directly contribute to final model capability. Based on Table 2.2, approximately 98.53% of annual training budgets are allocated to models other than the final deployment (calculated as 100% − (0.22%/15%), where 15% represents the estimated fraction of total compute dedicated to training (Bratt 2025)). Under the objective of producing a generalpurpose model, this implies that the vast majority of training compute is allocated to parameters that are ultimately discarded during the optimization process.

This annual estimate may understate total inefficiency, as it assumes frontier labs must train at least one model from scratch annually. If model parameters could be efficiently reused across generations (as RETRO/ATLAS demonstrate through their retrieval databases, where up to 98% of knowledge can be transferred between model versions (Borgeaud et al., 2022; Izacard et al., 2023)) the efficiency gap would be larger. However, retraining overhead represents only one source of computational waste. A second source arises from how models process information during training. To observe the theory behind this phenomena, consider the following analogy.

A Library Analogy

Once again, consider a library. When someone submits a new book to a library, the librarian doesn’t subsequently read every book in the library to figure out where to store it on the shelves. Instead, they use the catalog system to find a relevant bookshelf, the decimal system to locate the right placement on the shelf, and (perhaps) the title of the book to find its alphabetical placement on that shelf.

This practice stands in stark contrast to how AI systems are trained. To add a single training example into a model like GPT-3, AI users forward propagate through the entire model and all of its knowledge (i.e., read every book in the library). And to train an entire model like GPT-3 on modern training corpuses (i.e. trillions of tokens), it repeats this process trillions of times. It is like a librarian who repeatedly reads every book to figure out where a book should be placed, and then complains about not having enough librarian assistants (i.e. GPU compute threads) to accomplish the task.

The question concerns the magnitude of this inefficiency. The RETRO and ATLAS results previously discussed demonstrate that models can achieve comparable performance while being 25-50x smaller in parameter count. This parameter reduction translates directly to reduced training costs: fewer parameters require proportionally fewer FLOPs during both forward and backward propagation. The compression results further indicate that models can be trained with 5-10x fewer parameters without performance degradation, compounding the potential efficiency gains.

Yet, these three sources of training inefficiency (retraining overhead, dense forward propagation, and parameter redundancy) do not exhaust the potential efficiency gains. A fourth source arises from how models organize information during the training process itself.

A Library Analogy

Consider a brand new library. When a librarian goes to stock their library, they do not necessarily store every book in the universe in their library. Instead, libraries participate as a part of a library network. In this way, a nation-wide (or even global) community of libraries each store a cache of books, and when one user asks their local library for a book they do not have, that library will call in that book from another library. Through this process, even tiny, rural libraries are (in a way) making a massive, global collection of knowledge available to their local community. Some might even say that a small, local library makes all of humanity’s knowledge available to their local community, even if their local collection is small.

This practice stands in stark contrast to how AI systems are trained. Firms around the world are scraping the internet (or downloading web scrapes) and training their own models from scratch largely on the same information scraped from the internet. In these practices, they are encoding the same information redundantly across many organizations instead of building upon the existing knowledge already encoded into neural weights by other parties. That is to say, AI companies repeat each others’ work to a great degree.

AI models store information within their weights. General-purpose models encode substantial portions of publicly available internet data through training on large, overlapping corpora. Multiple organizations train models on similar or identical datasets, often drawn from common sources such as web scrapes and public repositories. This results in redundant encoding of the same information across independently trained models.

| Category | Computing Power (FLOP/s) |

Share (%) | Source/Calculation |

|---|---|---|---|

| Cloud/AI Providers | |||

| Meta | $1.79 \times 10^{21}$ | 5.57 | From Table 6.3 Total Q4 2024 |

| Microsoft/OpenAI | $1.38 \times 10^{21}$ | 4.29 | From Table 6.3 Total Q4 2024 |

| Google/DeepMind | $1.23 \times 10^{21}$ | 3.81 | From Table 6.3 Total Q4 2024 |

| Amazon/Anthropic | $7.19 \times 10^{20}$ | 2.23 | From Table 6.3 Total Q4 2024 |

| Consumer Computing | |||

| Smartphones | $7.48 \times 10^{21}$ | 23.23 | Sum of Active iPhones/Androids from Table 6.5 |

| PC CPUs/GPUs | $2.23 \times 10^{21}$ | 6.92 | Sum of PC CPUs and GPUs from Table 6.5 |

| Game Consoles | $8.64 \times 10^{20}$ | 2.68 | From Table 6.5 |

| Other Cloud/Pre-2023 | $1.65 \times 10^{22}$ | 51.28 | (see appendix for details) |

| Total | $3.22 \times 10^{22}$ | 100.00 | Sum of all rows above |

From an information-theoretic perspective, this redundancy is inefficient; the question is: how much? Comprehensive measurement would require documenting the overlap in training data and model capabilities across organizations, information that is not systematically available.

However, industry analysis provides order-of-magnitude estimates of aggregate underutilization. The largest AI firm controls less than 5.57% of global computing capacity (Table 2.3). If training resources could be pooled across organizations (analogous to libraries participating in a global network rather than maintaining independent collections) available compute would increase by a factor of approximately 100/5.57 ≈ 17.95x relative to any single firm’s capacity. This represents the theoretical gain from distributed training architectures that enable collaborative model development across organizational boundaries.

Yet, even these four training inefficiencies may not fully describe the inefficiency present in modern AI. To observe the theory behind this next problem, consider the following analogy.

A Library Analogy

Consider a brand new library which doesn’t even have any books in it yet. Let’s say the librarian is in a hurry, and so they take the first book, pick one of the empty shelves, set the book on that shelf, and then run to get the second book. Then, looking at the second book, they ask, ”is this similar to the first book... or different”. And if it’s similar to the first book, they put it closer to the first book on the shelves, and if it’s different from the first book, they put it farther away. This librarian repeats this process over and over until they encounter a problem. After 10,000 books (out of the millions they have to load), one of the bookshelves is full. So, because the shelf they need is full, they run to the next shelf and empty it... throwing books onto the floor to make space for their new book, which needs to be in this location. But now, they need to re-stock the books they just threw on the floor! They then pick up the books from the floor and attempt to find them all new places in the library, accidentally filling up shelves in the process. They then repeat this process many, many times... stocking and re-stocking all the books until a sensible organization emerges.

Or consider another librarian, who is opening a new library. But before they begin stocking books, they set up a Dewey Decimal System. They label each book in the system, count the number of books in each category, and plan their shelf capacity appropriately. Then, they take each book and load it into its appropriate shelf in a single pass. This second technique stands in stark contrast to how AI systems are trained. To add the first training example into an untrained model like GPT-3, AI users forward propagate through the entire model and all of its knowledge and store that information in random locations throughout the model. Then, as more training examples pile into the model, the model experiences catastrophic forgetting (Kirkpatrick et al., 2017) as collisions occur. And to train an entire model like GPT-3 on modern training corpuses (i.e. trillions of tokens), it repeats this process trillions of times.

Quantifying the computational cost of catastrophic forgetting remains challenging due to limited empirical work on information segmentation during training. RETRO and ATLAS provide partial evidence, as does work on curriculum learning and knowledge distillation. Kemker et al. observe that avoiding catastrophic forgetting requires approximately 40x larger model capacity (Kemker et al., 2018), though this estimate is not especially recent. Multiple sources of training inefficiency compound: full model retraining during updates, dense forward propagation through all parameters, parameter redundancy from insufficient compression, and capacity overhead to prevent catastrophic forgetting during sequential training.

These inefficiencies compound multiplicatively in terms of FLOPs. Parameter reuse across model generations could increase compute productivity by a factor of 100/(100 − 98.53%) ≈ 68x. RETRO/ATLAS-demonstrated parameter efficiency provides 25-50x gains. Lossless compression techniques offer an additional 5-10x reduction. Catastrophic forgetting avoidance inflates model sizes by approximately 40x. Combined multiplicatively, these factors suggest potential efficiency gains ranging from (68 × 25 × 5 × 17.95) ≈ 153,000x to (68 × 50 × 10 × 17.95 × 40) ≈ 24,400,000x, representing approximately 5-7 orders of magnitude of potential compute productivity improvement.

| Inefficiency Type | Range | Evidence |

|---|---|---|

| Inference Inefficiencies: | ||

| Full Forward Propagation | 25-50x+ | RETRO/ATLAS |

| Parameter Redundancy | 5-10x+ | Compression |

| Catastrophic Forgetting | 40x | Size Heuristic |

| Training Inefficiencies: | ||

| Re-training from Scratch | 68x+ | Industry Analysis |

| Full Forward Propagation | 25-50x+ | RETRO/ATLAS |

| Parameter Redundancy | 5-10x+ | Compression |

| Siloed Compute | ~17.95x | Global Compute |

| Catastrophic Forgetting | 40x | Size Heuristic |

| Combined Effects: | ||

| Inference Total | 5,000-20,000x+ | Multiplicative |

| Training Total | 6,103,000-24,412,000x+ | Multiplicative |

Taken together, these estimates suggest that current inference practices exhibit inefficiency factors of approximately 5,000-20,000x, while training practices exhibit inefficiency factors of approximately 150,000-24,000,000x. These bounds support the conclusion that at least six orders of magnitude of compute productivity remains unexploited in current AI systems.

Yet even these estimates contain within them one especially conservative estimate, the 25-50x inefficiency from dense forward propagation. While RETRO/ATLAS provides the only concrete lower bound on this inefficiency, the true sparsity opportunity is almost certainly significantly more. To return to the library analogy, what percentage of the world’s collective library is needed to answer a particular question? If one believes that 2-4 percent of all human knowledge is needed for every question, then perhaps the RETRO/ATLAS estimate is accurate.

While systematic measurement is lacking, the assumption that any query requires more than one-millionth of a model’s knowledge base (> 10−6 ) appears conservative, suggesting these efficiency estimates may understate potential gains by an additional 4 orders of magnitude (although this is merely conjecture... future empirical work is needed).

A Full Picture of Compute Waste: The Library Analogy

Consider first how an AI system would operate as a librarian. When someone asks about the rules of chess, this librarian doesn’t merely consult the games section. Instead, they read every single book in the library. Not just once, they do this for every single query. When this AI librarian needs to add a new book to their collection, they don’t simply locate an appropriate shelf using a catalog system. Instead (and this characterizes a fundamental inefficiency in current AI systems) they first read every book in the library, then displace existing books onto the floor to make space, then must re-read everything to determine where to relocate those displaced books. This process repeats, sometimes trillions of times, until the library reaches a new equilibrium. Worse, if a book needs to be decisively removed, the entire library must be burned to the ground, all of the books burned, and a new library constructed from scratch, repeating the entire aformentioned process over again.

Furthermore, this AI librarian doesn’t participate in an efficient network of libraries. Instead, they insist on maintaining their own complete copy of every book in existence, greatly amplifying the challenge of the aformentioned processes. When other AI librarians open new libraries, they too download and store the same vast collection, redundantly encoding identical information in countless separate locations (but also re-paying the cost of learning how to organize their libraries). And when these AI librarians need to modify their collections (to add or remove significant knowledge) they don’t merely reorganize their existing structure. Instead, they retrain from scratch... also equivalent to burning their entire library to the ground, demolishing every book, and rebuilding the complete collection from the ground up (repaying all of the aformentioned costs).

Now consider how human librarians process information. When someone inquires about chess, they navigate directly to the games section, select a relevant text, and locate the rules. When adding a new book, they utilize the Dewey Decimal system to identify the appropriate shelf and place it there. The process is direct, efficient, and purposeful. Moreover, human librarians don’t attempt to store every book in existence in their local library. Instead, they participate in an interconnected system of libraries, each maintaining their own cache of books. When a patron requests a book not locally available, the librarian simply requests it from another library in the network. Through this elegant system, even the smallest rural library can provide access to humanity’s collective knowledge.

The contrast between these approaches illuminates a critical insight about current AI systems. They operate with a level of inefficiency that we’ve somehow normalized within the field. In essence, what we’ve built is a global network of millions of librarians who must read their entire library just to fetch a single book, read it again to add a new book, and then read it countless more times to relocate all the books they displaced in the process. And when they’re not doing this trillions of times over, they’re burning their buildings to the ground, destroying their entire collections, and starting over from scratch. And as the world’s AI compute costs approach the level of a small nation, this practice, despite being ubiquitous within AI research, represents perhaps the greatest inefficiency in the history of information processing. And via this chapter, this thesis will describe existing innovations which could alleviate much of this inefficiency.

6+ OOM: Siloed Data

Following growing rumors across the AI research community that data is becoming a major bottleneck, OpenAI’s former chief scientist, Ilya Sutskever, announced during his test of time award speech at NeurIPS 2024 that data for training AI has peaked, ”We’ve achieved peak data and there’ll be no more” (Robison 2024). However, while this may be true for the AI industry, and Ilya (being recent Chief Scientist at OpenAI) is perhaps one of the best people in the world to know, Ilya’s statement does not reflect the reality of what data exists in the world.

A Library Analogy

Consider a world where libraries could only acquire books through anonymous donations left on their doorstep. No matter how many valuable books exist in private collections, university archives, or government repositories, libraries would be limited to what people voluntarily abandon. In such a world, librarians might reasonably conclude they’re ”running out of books”, even while surrounded by vast, inaccessible collections within surrounding businesses and homes.

This mirrors the current state of AI training. When frontier models like GPT-4 (trained on 6.5 trillion tokens), and Qwen2.5-72B (18 trillion tokens), LLama 4 (30 trillion tokens), (Epoch AI 2024) report hitting data limits, they’re really hitting access limits. They’re not running out of data, they’re running out of data they can freely collect.

2-4 Orders of Magnitude: Text Humans Create By Hand

Dataset sizes for frontier AI models range from publicly disclosed values to industry estimates. GPT-4 was trained on approximately 6.5 trillion tokens, while Alibaba’s Qwen2.5-72B used 18 trillion tokens. The largest reported text dataset, used for Meta’s Llama 4, contains 30 trillion tokens (Epoch AI 2025). Using RedPajama as a reference (Together 2023), each trillion tokens requires less than 6TB of storage, implying that the largest known training dataset (Llama 4) occupies less than 180TB.

| Category & Source | Words (T) | Tokens (T) | Rel. Size* |

|---|---|---|---|

| Web Data | |||

| FineWeb | 11 | 15 | 1.0 |

| Non-English Common Crawl (high quality) | 13.5 | 18 | 1.0 |

| All high quality web text | 45-120 | 60-160 | 4.0-11.0 |

| Code | |||

| Public code | - | 0.78 | 0.05 |

| Private Code | - | 20 | 1.3 |

| Academic Publications and Patents | |||

| Academic articles | 0.8 | 1 | 0.07 |

| Patents | 0.15 | 0.2 | 0.01 |

| Books | |||

| Google Books | 3.6 | 4.8 | 0.3 |

| Anna's Archive | 2.8 | 3.9 | 0.25 |

| Every unique book | 16 | 21 | 1.4 |

| Court Documents | |||

| US federal court documents | 2 | 2.7 | 0.2 |

| *Relative size using Llama 3 = 1 as reference | |||

The scale of untapped data is staggering. As shown in Tables 2.5 and 2.6, stored email and instant messages alone contain over 1,850 trillion tokens, approximately 60 times the largest known training dataset (Cummins 2024). Daily human communication generates approximately 150 trillion tokens, accumulating to roughly 55 quadrillion tokens annually (approximately 1,750 times the scale of frontier training sets).

6+ Orders of Magnitude: Multi-media data broadly

Yet even this vast sea of text represents merely a drop in the ocean of total digital data. While frontier AI models train on curated web scrapes such as Common Crawl (454 TB as of December 2023) (Wikipedia contributors 2024), the Internet Archive’s Wayback Machine alone stores approximately 100 petabytes (Kahle 2024). Meanwhile, global digital data is projected to reach 180 zettabytes by 2025 (Mider 2024; Taylor 2024), six orders of magnitude larger than The Internet Archive and nine orders of magnitude larger than the largest known training datasets.

| Category & Source | Words (T) | Tokens (T) | Rel. Size* |

|---|---|---|---|

| Social Media | |||

| Twitter / X | 8 | 11 | 0.7 |

| 29 | 38 | 2.5 | |

| 105 | 140 | 10.0 | |

| Publicly Available Audio (Transcribed) | |||

| YouTube | 5.2 | 7 | 0.5 |

| TikTok | 3.7 | 4.9 | 0.3 |

| All podcasts | 0.56 | 0.75 | 0.05 |

| Television archives | 0.05 | 0.07 | 0.001 |

| Radio archives | 0.5 | 0.6 | 0.04 |

| Private Data | |||

| All stored instant messages | 500 | 650 | 45.0 |

| All stored email | 900 | 1200 | 80.0 |

| Total Human Communication | |||

| Daily | 115 | 150 | 10 |

| Since 1800 | 3,000,000 | 4,000,000 | $10^5$ |

| All time | 6,000,000 | 8,000,000 | $10^5$ |

| *Relative size using Llama 3 = 1 as reference | |||

A Library Analogy

Consider a national library system. While a single library might proudly maintain millions of books, this represents only a tiny fraction of all written human knowledge. Beyond its walls lie vast corporate archives, government repositories, university collections, and personal libraries. To get a sense of scale — consider the size of a library’s physical building, and compare that to the size of the rest of the physical buildings in a city — each with books and letterboxes and filing cabinets containing all manner of correspondence and record. Each holds unique and valuable information, yet remains inaccessible to the library system not because of physical constraints, but because of attribution and control concerns.

Similarly, when AI companies claim to have ”reached peak data,” they’re really saying they’ve exhausted what they can freely obtain (often without permission or attribution). The actual digital data of the world — in private databases, corporate systems, government archives, and personal devices — remains largely untapped, representing over six orders of magnitude more information than current AI systems can access.

The magnitude of this disparity is difficult to comprehend. While the largest known AI dataset (to the awareness of this researcher) is roughly 180 TB of information, and may be derived from a dataset as big as common crawl (450 TBs of information), or if we were very, very conservative, might be as big as the full history of the entire publicly indexable internet (and other data the Internet Archive keeps) 100 PBs, even this number is 6+ orders of magnitude smaller than the amount of digital data in the world. Taken together, even under highly conservative estimates, it is very likely that AI has not yet trained on even one millionth of the amount of data that humanity has digitized. And beyond what humanity has digitized lies the vast amounts of information which is not yet encoded into a computer.

Consider the 150 trillion tokens humans create every day, the zettabytes-worth of yet-to-bevideoed information going on across the planet and all of its inhabitants which (despite helping living creatures get smarter day-in-and-day-out) is completely inaccessible to systems which only read digital information. This striking disparity between used and available data raises a crucial question: why, in an era of unprecedented digital abundance, do AI systems train on such a microscopic fraction of all knowledge? The answer lies not in easily observable symptoms like data availability, but in fundamental problems underpinned by insufficient ABC.

The Search for Root Causes (Three "Whys")

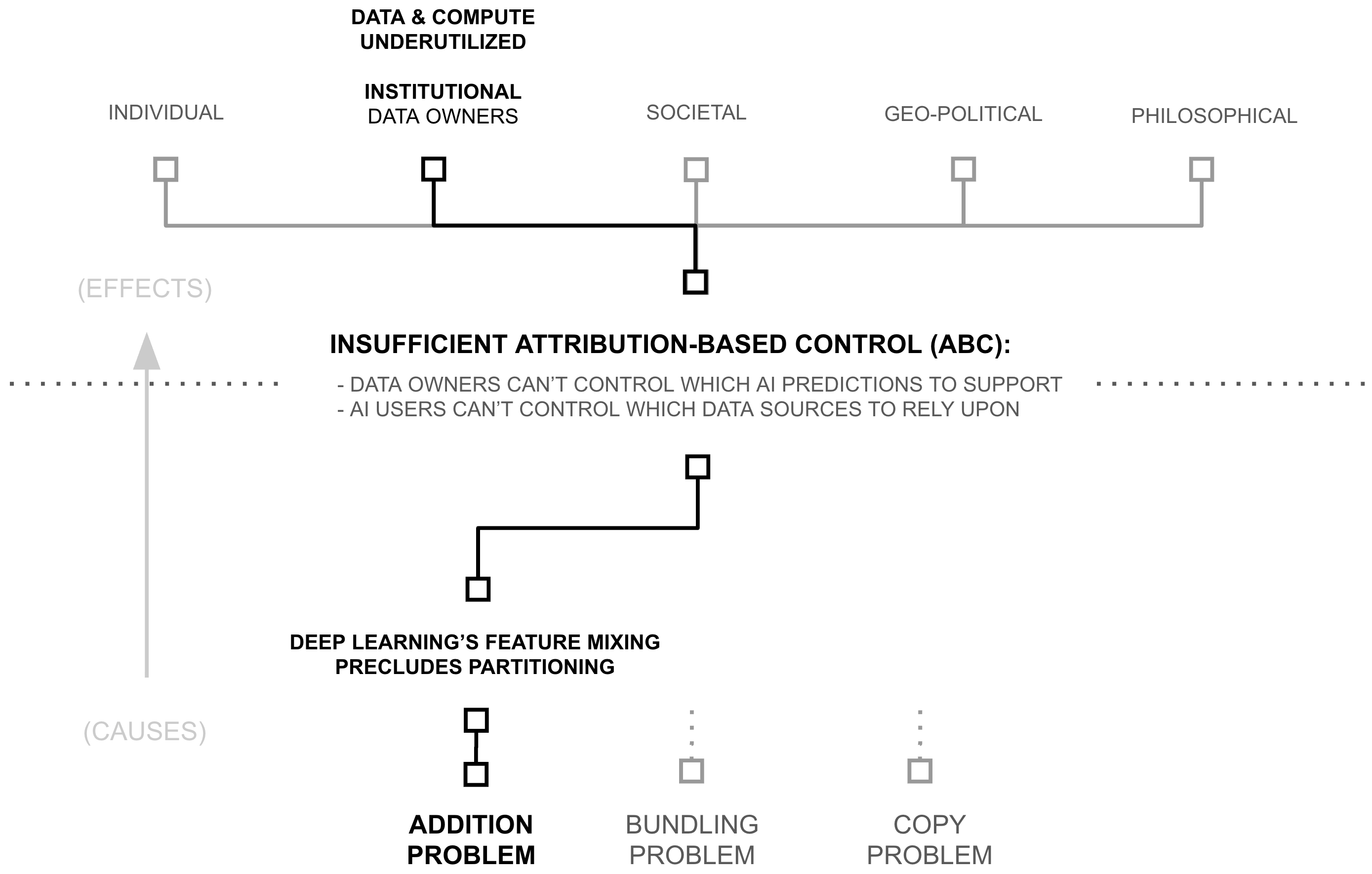

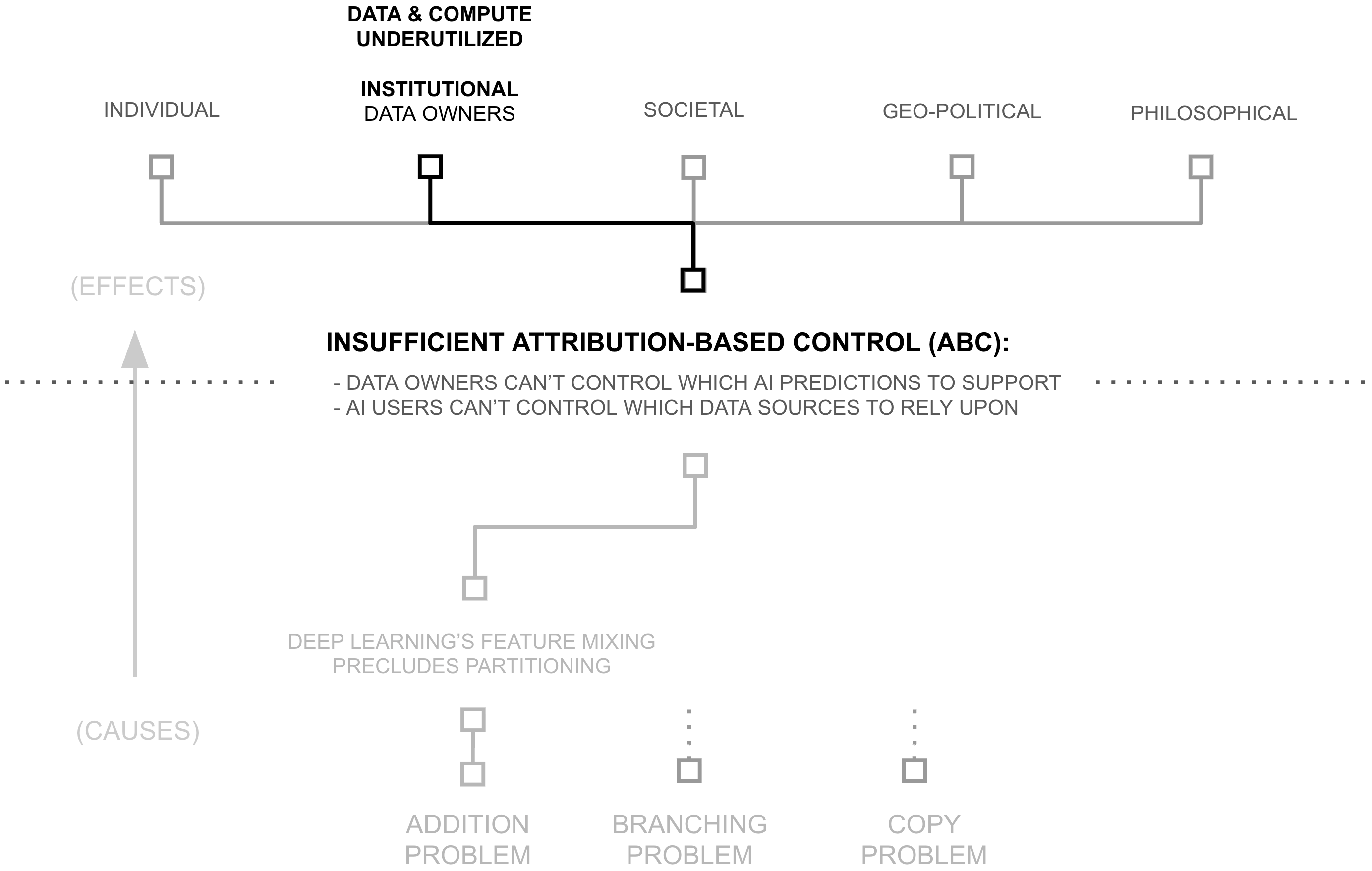

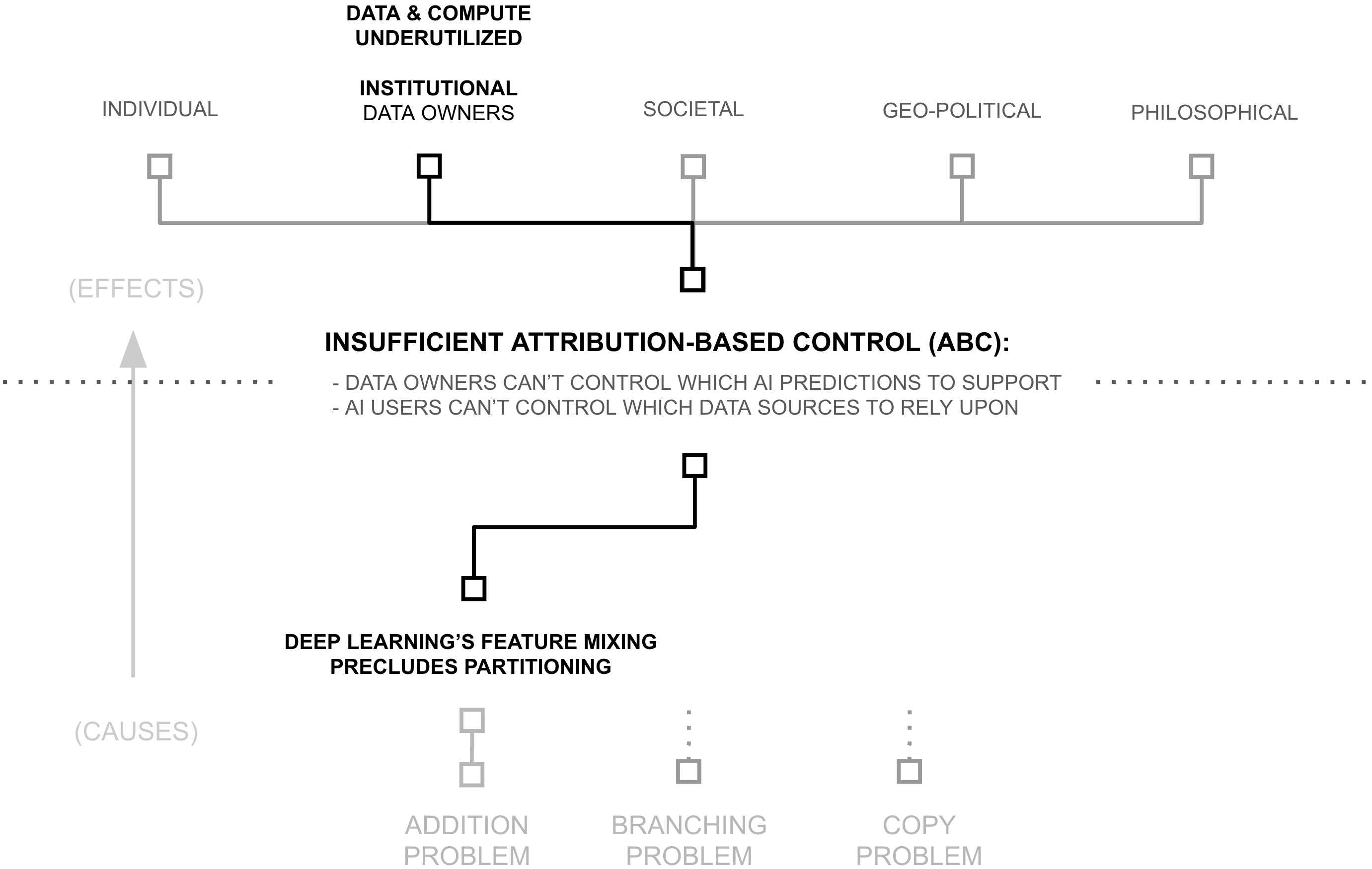

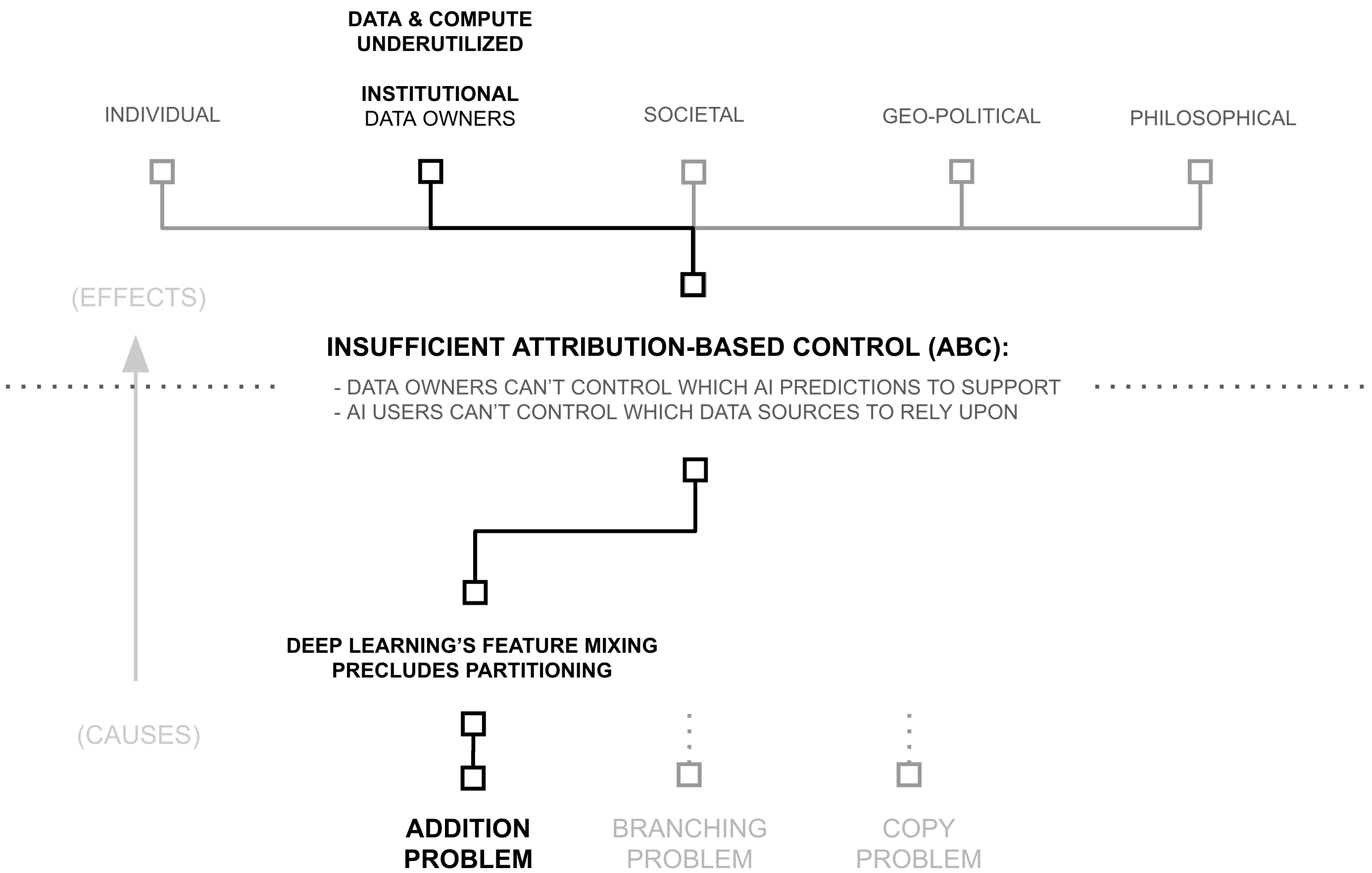

The previous section revealed a paradox: despite widespread beliefs of data and compute scarcity, AI systems access less than one millionth of digital resources, and an untold microfraction of the world’s information broadly. This under-utilization raises a critical question: if more data and compute directly improves AI capabilities through scaling laws, why do AI systems use such a tiny fraction of what’s available? The answer lies in a cascade of technical and institutional barriers, each revealing a deeper ”why” that must be understood:

- First Why: Attribution-based Control

- Second Why: Deep Learning's Feature Mixing Precludes Partitioning

- Third Why (Root Cause): Addition of Source-Separable Concepts

As we follow this chain of questions, we’ll see how each answer reveals a deeper technical challenge. More importantly, we’ll discover how recent breakthroughs in cryptography, deep learning, and distributed systems have already created solutions to these challenges (solutions which remain largely unrecognized by the AI community).

First Why: Attribution-based Control

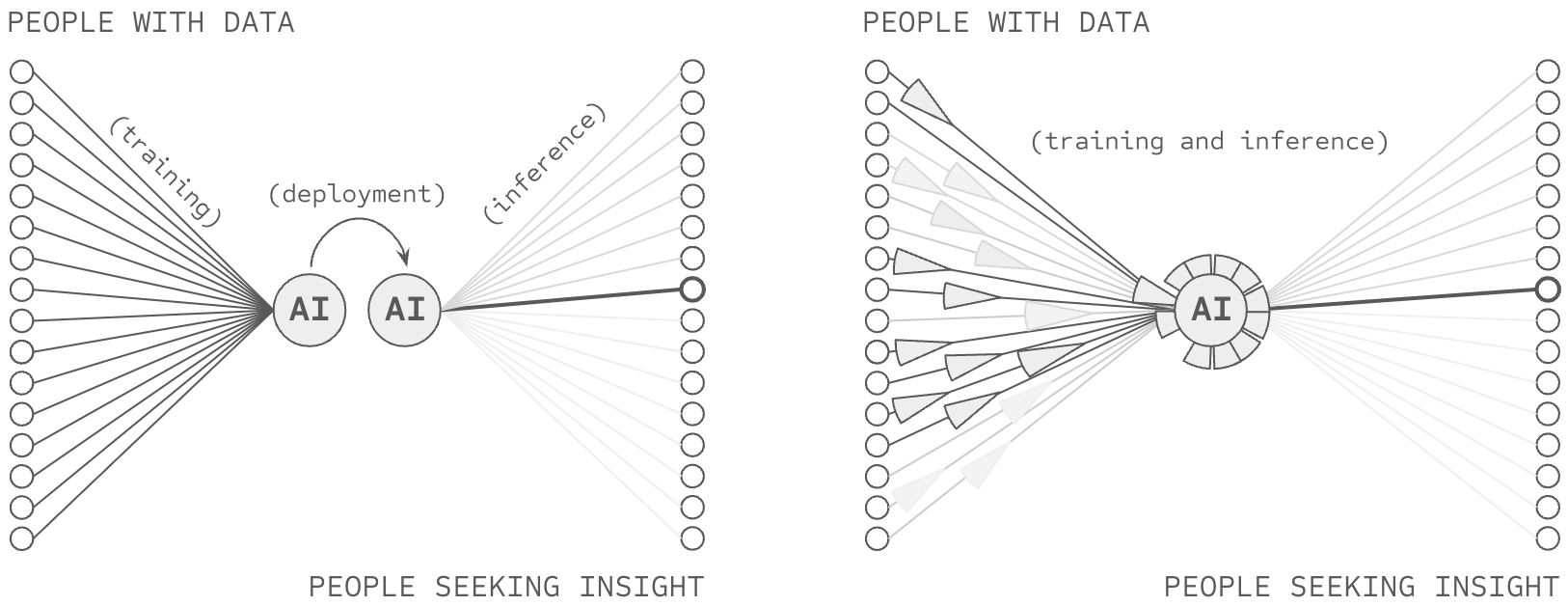

The previous section revealed significant inefficiencies in the training of AI systems: 6+ orders of magnitude in underutilized data and compute. While there may be multiple contributing factors to these constraints, this thesis and chapter examines one particular root cause: AI’s inability to provide attribution-based control (ABC). An AI model possesses attribution-based control when two properties are true: data sources control which AI predictions they support, AI users control which data sources they rely upon for an AI prediction. Following this definition (Definition 1.1.1), ABC implies certain architectural properties as novel requirements:

- Source-Partitionable Representations: Knowledge within an AI system is

partition-able

by source, otherwise sources lose control when their information is mixed, requiring:

- Source-Partitionable Inference: Partitions are independently usable at inference, otherwise users can’t select specific sources and sources can’t participate irrespective of the decisions of other sources

- Source-Partitionable Training: Partitions are independently trainable, otherwise sources can’t update their contributions without requiring other sources to do so.

- Rapid Partition Synthesis: Partitions are rapidly synthesize-able during inference, otherwise collective insights which are only learnable via information from multiple sources cannot be realized in production AI systems.

Frontier AI systems lack these properties. Yet, if these properties were achieved, attribution would be achieved and aforementioned problems regarding data and compute productivity would be impacted. Let’s examine each in detail, linking each problem to attribution-based control.

ABC and Compute Productivity (6+ OOM)

ABC would address compute productivity issues along two dimensions: access and learning structure. Regarding structure, successful ABC would necessarily provide a means to structure the learning and representation process, reducing re-training, forward propagation, redundancy, and catastrophic forgetting. Regarding access, ABC would provide a means to overcome incentive issues presently siloing the world’s compute resources. Let us consider these claims in the context of inference and learning.

2-3 OOM: Inefficient AI inference

A Library Analogy

Consider a library wherein a librarian reads every book in the library whenever they need to answer a question, including reading multiple copies of the same book and pretending to read an empty book whenever shelves contain empty sections.

A solution to attribution-based control would necessarily reconfigure the library to fetch information based on its source (book or author). While such a solution might be challenging, if it successfully delivered capable predictions, it would necessarily do so while skipping an enormous amount of wasted computation. This is because ABC is not actually about selecting the sources one desires (normal full forward propagation already does this), its actually about ignoring all the books you don’t want. ABC requires making an AI inference while skipping an enormous amount of wasted computation, because (to return to the analogy) it would involve training the librarian on how to skip reading the entire library when making a prediction... and only fetch information from specific, relevant sources (i.e. books).

Thus, while ABC’s definition might not appear to require an increase in computational efficiency, its definition directly requires that a vast amount of information not be included in the computational process, reducing the need to compute over that information.

Recall that current AI models must activate vast numbers of parameters for every prediction because they struggle to pre-identify which parameters are storing relevant information to the present query; they struggle with sparse forward propagation. While various approaches to sparse AI exist, successful ABC would necessarily enable one particularly compelling form of sparsity: source-based sparsity (i.e. source-based partitioning). Consequently, if an AI model could forward predict based on a relevant subset (i.e., the top 10) of many (i.e. billions) of sources, then it is very likely to also greatly reduce the computational complexity involved in forward propagating, because it would be forward propagating through vastly less information.

RETRO and ATLAS demonstrate the minimum scale of such a breakthrough. By maintaining source-based partitions through their database architecture, they achieve equal performance while activating only 2-4% of the parameters of similarly performant dense models (Borgeaud et al., 2022; Izacard et al., 2023).

Similarly, recall how current models process some combination of redundant copies of knowledge and empty regions of parameter space during inference. While there are many approaches to reducing these forms of waste (e.g., distillation, compression, etc.), successful ABC would necessarily enable one particularly compelling way to avoid copies or empty space: source-based partitioning. If an AI model could forward predict based on a relevant subset (i.e., the top 10) of many (i.e., billions) of sources, then tuning the number of sources being relied upon could also tune the redundancy being used in forward propagation. Meanwhile, ensuring that the partitions being leveraged for forward propagation were relevant to the query would combat the risk of forward propagating empty space 2.

RETRO and ATLAS demonstrate these principles through their database architecture, showing how source-based organization can naturally eliminate redundant processing and avoid computation on irrelevant parameters while maintaining model capability. They also demonstrate the ability for such partitioning to increase the number of samples being used against a dense (i.e. non-partitioned) section of the network, reducing empty space. Yet as significant as these inference inefficiencies are, they pale in comparison to the waste in how AI systems learn.

6+ OOM: Underutilized and Inefficient Compute in AI Learning

Recall that current AI models must retrain entirely from scratch when updating their knowledge, because of problems such as catastrophic forgetting (Kemker et al., 2018). While various approaches to incremental training exist, successful ABC would necessitate one particular solution: source-partitioned retraining. Consequently, if an AI model can train source-separated subsections of its weights, it can re-train them as well, and it is very likely to also greatly reduce the computational complexity involved in the re-training process, because it would be re-training vastly fewer parameters at a time. RETRO/ATLAS demonstrate one such approach, wherein re-training can be done with the computational complexity involved in adding or removing vector-embeddings from a database.

Similarly, recall how current training processes waste compute in multiple ways: through redundant copies of the same knowledge, through activation of irrelevant parameters, and through inefficient parameter density. While various approaches to training efficiency exist, successful ABC would necessarily enable compelling means to overcome these problems (as already described in the previous section describing ABC’s impacts on inference).

Additionally, these ABC opportunities further compound when we consider how compute is distributed across organizations. Consider our library analogy once more: libraries don’t each maintain copies of every book ever written; they form networks to share resources efficiently. In contrast, frontier AI models are created by a host of organizations around the world, each re-paying the cost of creating an AI model (most of which are trained on largely the same data).

Successful ABC may activate an economic incentive addressing this waste 3 . At the present moment, frontier AI models are economic bundles, marketed under a story which itself is an economic bundle: artificial general intelligence. Because of this, if one AI company re-trains the capabilities of another company (e.g. spending $200M on compute) and then adds a bit more to it (e.g., another $10M in data and compute), an end user must then choose between that full economic bundle and another full economic bundle. This creates a situation wherein companies effectively have to pay the minimum amount to catch up to the leading position and then extend some beyond it.

2 Additionally, if successful ABC reduced the number of parameters being used as a result of these other techniques, then it would also increase the number of samples being applied to each set of dense parameters — perhaps reducing the opportunity for empty vector space (more on this later)

3 This hypothesis has been somewhat validated by OpenMined in early pilots of ABC-enabled AI systems with publishers, but this research is as-of-yet unfinished

A Library Analogy

Consider a new library which doesn’t yet have any books. To load the library in the style of AI, a librarian would first build tens-of-thousands of libraries and print copies of books into each one (i.e. signifying both the redundant training of models by many companies and the hyperparameter sweeps occurring within each one). Then, within each library, a librarian would first load all the shelves (of which there are a fixed number) books containing random strings of letters (i.e., initialize a model randomly). Then, the librarian would select the first book to load into the library, pretend to read every word in every one of the random books, and after that was done, select which book to replace with the book being loaded into the library, casting the book being replaced onto the floor. The process would repeat until all of the books had been loaded... with a catch. Each time the book being thrown on the floor wasn’t a random book (but was a real book) that real book then needs to go through the process again itself. And in the end, if the library wasn’t big enough to contain all the books, all the libraries would be destroyed, all the copies of books burned, and everyone would re-build bigger libraries to hold the vast and growing collection of books.

A solution to attribution-based control would necessarily reconfigure the library to load information based on its source (book or author). While such a solution might be challenging, if it successfully delivered capable predictions, it would necessarily do so while skipping an enormous amount of wasted computation. Instead of each library creating a collection big enough to hold the world, the vast collection of the world’s books could be divided among the libraries (perhaps with some mild redundancy). Each library could then organize their books by the dewey decimal system, measuring how big each section in their library needs to be in order to hold the sections they are meant to store. After these measurements were completed, the building could be constructed, the books loaded in their proper places, and the job could be completed.

And in this way, the librarian could avoid storing all the world’s information in their own library, re-building libraries from scratch, reading all the books in the library over and over, loading in many copies of the same book, loading in empty or random books, and re-loading books which no longer fit on the shelves they’re loading. Taken together, while ABC’s definition might not appear to require an increase in computational efficiency, its definition directly requires that a vast amount of information not be processed during iterative steps in the training process, reducing the need to compute over that information. In some cases, ABC implies the elimination of iterative processes altogether.

However, successful ABC would modularize the initial capability, such that some percentage of the original $200M could be inherited from previous models and then extended with new capabilities. Given the immense costs involved, such a modularization breakthrough would constitute an enormous economic pressure. If firms pedantically chose to re-pay the cost to train their own from scratch, recreating 90% of the modules which already exist in the market, they would incur very high costs which must correspond with very high prices to recoup those costs. Inversely, a startup which came along and inherited the 90% produced by others, paying only for their specialization, would (all else equal) be able to charge lower prices, and win in the market.

From a compute efficiency standpoint, the prospect of cross-market weight-reuse translates directly into the sharing of compute costs for training AI systems. Industry analysis reveals the potential impact: no single AI provider controls more than 5.57% of global compute capacity. Thus, since source-based partitioning could unlock this siloed compute by enabling controlled sharing of specialized knowledge, it could increase effective compute by 17.95x (100/5.57) or more because organizations would waste fewer resources re-computing features which are already commoditized in the market. Taken together, economic unbundling would plausibly drive specialization and more efficient use of compute resources in the market.

While various approaches to these inefficiencies exist, solving ABC would necessarily enable one comprehensive solution path. Note that this is not saying that ABC is the solution, merely that ABC is difficult because it would involve solving these other difficult challenges... because ABC requires that sources maintain control over their contributions while enabling rapid synthesis. Taken together, any solution to ABC solution must provide:

- Selective retraining instead of full rebuilding (68x+ improvement)

- Efficient computation during training (25-50x+ improvement)

- Reduced parameter redundancy through source-based organization (5-10x+ improvement)

- Specialization with controlled sharing (17.95x+ improvement)

- Organization averting catastrophic forgetting (17.95x+ improvement)

Industry analysis and empirical results suggest the combined impact could be dramatic. When these improvements compound multiplicatively, they point to potential training efficiency gains of 6+ orders of magnitude. Yet these numbers, as striking as they are, point to something more fundamental: our failure to maintain attribution in AI systems coincides with a broader acceptance of wasteful practices as inevitable. More than a technical issue, the inefficiency of AI training is a symptom of how we’ve structured AI computation. While other solutions may exist, attribution-based control offers one path to reimagining how AI systems learn and compute, potentially unlocking orders of magnitude more efficiency in the process.

How Failing ABC Siloes Data (6 OOM)

Recall that current AI models can only train on data they can access, which is dominated by data available to the public over the internet. Consequently, AI models almost certainly train on less than 1/1,000,000th of the digitized information in the world because they cannot access the other 99.9999%, which remains hidden amongst the world’s 360 million companies, 8+ billion citizens, etc. (Bogwasi 2025).

While various approaches to data access exist, successful ABC would necessarily enable one compelling solution: controlled data sharing. We take as an assumption that the world’s data owners have some uses in the world they would support (for which their data could be useful). We take as a second assumption that a significant portion of those sources are hidden because the incentives are not sufficient for them to support — or more crucially because the negative consequences would be too great, that their data might not just activate the uses they wish to support, but that it would also activate mis-uses (concerns regarding privacy, security, IP, competition, legal risks, etc.).

Successful ABC would necessarily enable one particularly compelling form of data sharing. The ability for a data source to decide which AI predictions to support is (almost tautologically) the ability for a data source to enable uses while averting mis-uses. Consequently, AI empowered by ABC may activate vastly more data than is presently available. One could argue that truly successful ABC would constitute an incentive shift attracting all of the world’s data to be pulled into at least some AI predictions.

The potential impact is staggering. While frontier AI models train on carefully curated web scrapes on the order of 180 TBs (and the public web plausibly less than common crawl’s largest copy, 450 TBs) the world’s total digital data is estimated to reach 180 zettabytes by 2025. This six-to-nine orders of magnitude difference represents all the data locked behind organizational boundaries, including some data of immense value (e.g. financial data, health data, environmental data, etc.).

RETRO and ATLAS demonstrate part of potential path forward, demonstrating a type of partial ABC at scale by training AI models which query from a database. Certain extensions (such as those suggested in this thesis) could take this further to enable full attribution-based control, and the shifting of incentives around data sharing.

Synthesis: Attribution as a Path Forward

The previous sections revealed two significant inefficiencies in current AI systems: 6+ orders of magnitude waste in compute and 6+ orders of magnitude in untapped data. While there may be many approaches to addressing these inefficiencies, this thesis focuses on attribution-based control as one solution path. As we’ve seen, solving ABC (if such a solution exists) would necessarily enable both efficient compute through source-based partitioning and broad data access through attribution preservation.

Yet this raises a deeper question: if ABC offers such compelling benefits, why don’t current AI systems maintain attribution? The answer, as we’ll see in the next section, lies in how neural networks fundamentally process information. The unconstrained mixing of features during training makes it impossible to partition knowledge by source, revealing our second ”why”: deep learning’s feature mixing precludes the very partitioning that ABC requires.

Second Why: Deep Learning's Feature Mixing Precludes Partitioning

The previous section revealed how solving attribution-based control would necessarily enable massive data and compute gains in AI systems. Yet this raises a deeper question: why do current AI systems fail to maintain attribution in the first place? The answer lies in deep learning’s foundational premise: algorithms should learn everything from scratch through layers of (largely) unrestricted feature mixing on raw data (Goodfellow et al., 2016).

This commitment to unrestricted learning manifests in how neural networks fundamentally process information. Through operations that combine and mix information at every step (from layer computations to weight updates to knowledge accumulation) neural networks create increasingly complex representations of patterns in their training data. While this flexibility enables powerful pattern recognition, it creates a fundamental problem: features become stored in a disorganized, obfuscated way within the deep learning model... a black box.

Consider what happens when a neural network learns to recognize cats. Rather than storing clear, interpretable features like ”pointy ears” or ”whiskers”, the network distributes this knowledge across its weights in complex, entangled patterns (Le 2013). This unrestricted mixing of features makes post-hoc source-based partitioning impossible (at the present moment):

- Features can’t be attributed to specific sources (preventing data control)

- Knowledge can’t be updated independently (requiring full retraining)

- Computation can’t be selectively activated (forcing dense inference)

- Resources can’t be efficiently shared (blocking specialization)

The research community has made extensive efforts to address these limitations. Recent work has attempted to trace predictions back to training data through influence functions, remove specific datapoints’ influence through machine unlearning, and develop various attribution methods to reverse engineer the source-prediction relationship (Nguyen et al., 2024). Yet despite these attempts, both influence functions and unlearning remain unsolved challenges in the literature. So far, the relationship between sources and predictions has been irreversibly compressed during training, and no amount of post-training intervention has successfully restored these lost connections.

The consequences are severe. New models like GPT-5 cannot inherit features from predecessors like GPT-4... they must relearn basic patterns from scratch. Even during inference, models must activate vast parameter spaces for every prediction, unable to pre-identify which features are relevant (and only forward propagate those features). These inefficiencies aren’t mere implementation details that clever algorithms might solve. They stem from something more fundamental about how deep learning processes information.

Yet this raises an even deeper question: why does feature mixing obfuscate attribution-based control? The answer, as we’ll see in the next section, traces back to deep learning’s most basic mathematical operation and how it fundamentally precludes the partitioning that ABC requires.

A Library Analogy

Consider a library wherein all of the books have had their covers removed, their table of contents erased, and their chapters torn out and shuffled amongst all the books. Consequently, when someone wants to answer a specific question, they have to read through the entire library searching for relevant information for their query.

Deep learning stores information in a similar way, with so-called distributed representations spreading concepts across many neurons... each of which is unlabeled (i.e. ”hidden”). Far from an accident, this form of learning is at the center of deep learning’s core philosophy, the unrestricted learning of dense, hidden features.

Third Why (Root Cause): Addition of Source-Separable Concepts

The previous section revealed how deep learning’s feature mixing precludes the partitioning required for attribution-based control. Yet this raises our final ”why”: what makes this mixing fundamentally irreversible? The answer lies in deep learning’s most basic mathematical operation: addition.

Addition might seem like an implementation detail, but it fundamentally prevents recovering source information. When values combine through addition, the result does not uniquely determine its inputs—multiple distinct source combinations produce identical outputs:

Non-Injectivity of Addition

Addition is not injective: for any sum y, there exist infinitely many distinct pairs (x1, x2) and (x'1, x'2) such that x1 + x2 = y = x'1 + x'2 where (x1, x2) ≠ (x'1, x'2).

This non-injectivity means that observing the sum provides no information about which specific sources contributed. Consider the contrast with concatenation:

Concatenation vs Addition

Concatenation preserves sources:

"1" ⊕ "6" = "16"

"2" ⊕ "5" = "25"

(distinct inputs → distinct outputs)

Addition erases them:

1 + 6 = 7

2 + 5 = 7

(distinct inputs → identical outputs)

When partitions are known, concatenation of numbers is injective; different inputs produce different outputs, allowing source recovery. Addition is not; the output 7 could arise from 1 + 6, 2 + 5, 3 + 4, 0 + 7, or infinitely many other combinations. This non-injectivity is the mechanism through which deep neural networks erase attribution information: once gradients from different sources are summed into shared parameters, no function of those parameters can recover which sources contributed what information.

And neural networks use addition extensively: combining features between layers, aggregating gradients during backpropagation, and updating weights during training. Each addition irreversibly combines information, destroying the provenance of where that information came from and how it was interpreted.

A natural solution might seem possible: why not just track every operation during training ... while also doing these additions? Unfortunately, this approach fails for three fundamental reasons:

First, in models like GPT-3, through forward and backward propagation, each example’s information eventually touches every weight in the network. Even tiny weight changes alter how future examples flow through the network, creating cascading effects that can amplify initially small influences (related: vanishing and exploding gradients (Hochreiter 1998; Hanin 2018)).

Second, these influences compound exponentially and recursively, creating higher order all-to-all relationships between inputs and weights as they do. Consider the mathematics of weight updates for a weight update function g, weights and data at time t as w t and x t :

w t+1 = g(wt, xt)

w t+2 = g(g(wt, xt), xt+1)

w t+3 = g(g(g(wt, xt), xt+1), xt+2)

The number of potential attribution paths grows as Ω(w · n)t , where w is the number of weights, n is the number of examples, and t is the number of steps.

Third, this exponential growth makes exact attribution computationally intractable. The most capable language models frequently leverage just under 1% of their parent organization’s AI training budget (see Appendix I and II for details). Thus, tracking the full web of dependencies (every interaction, every update, every influence path) would require many orders of magnitude more compute than is available to the largest tech firms.

These three barriers (information loss through addition, exponential propagation of influences, and computational intractability) combine to create a fundamental limitation. No amount of clever engineering can fully recover what addition has destroyed. This mathematical reality explains why attempts at machine unlearning and influence functions remain fundamentally limited: they try to reconstruct what addition has already erased.

The Root Problem: The implications are significant. Without the ability to track sources through training, we cannot provide the attribution that ABC requires. Without attribution, we cannot enable the partitioned sharing and use of data and compute that could unlock orders of magnitude more AI resources. Addition itself blocks the very data and compute gains described earlier in this chapter, and holds up the many problems described in Chapter 1.

A Library Analogy

Consider a library wherein all of the books have had their covers removed, their table of contents erased, and individual sentences on each page torn out into their own strips. Now imagine that each word in each strip is converted into a number ”aardvark = 1”, ”abernathe = 2”, and so forth. And then imagine that each of these strips from the whole library is shuffled around, and groups of strips are placed back in the coverless books. Yet, instead of each strip being glued in place, it is first combined with many other strips, adding their respective numbers together. Consequently, when someone wants to answer a specific question, they have to read through the entire library searching for relevant information for their query, first by converting their query into numbers and then attempting to match it to numbers found in the books.

Deep learning stores information in a similar way, with so-called distributed representations spreading concepts across many neurons... each of which is unlabeled (i.e. ”hidden”). Far from an accident, this form of learning is at the center of deep learning’s core philosophy, the unrestricted learning of dense, hidden features which are formed through many successive additions.

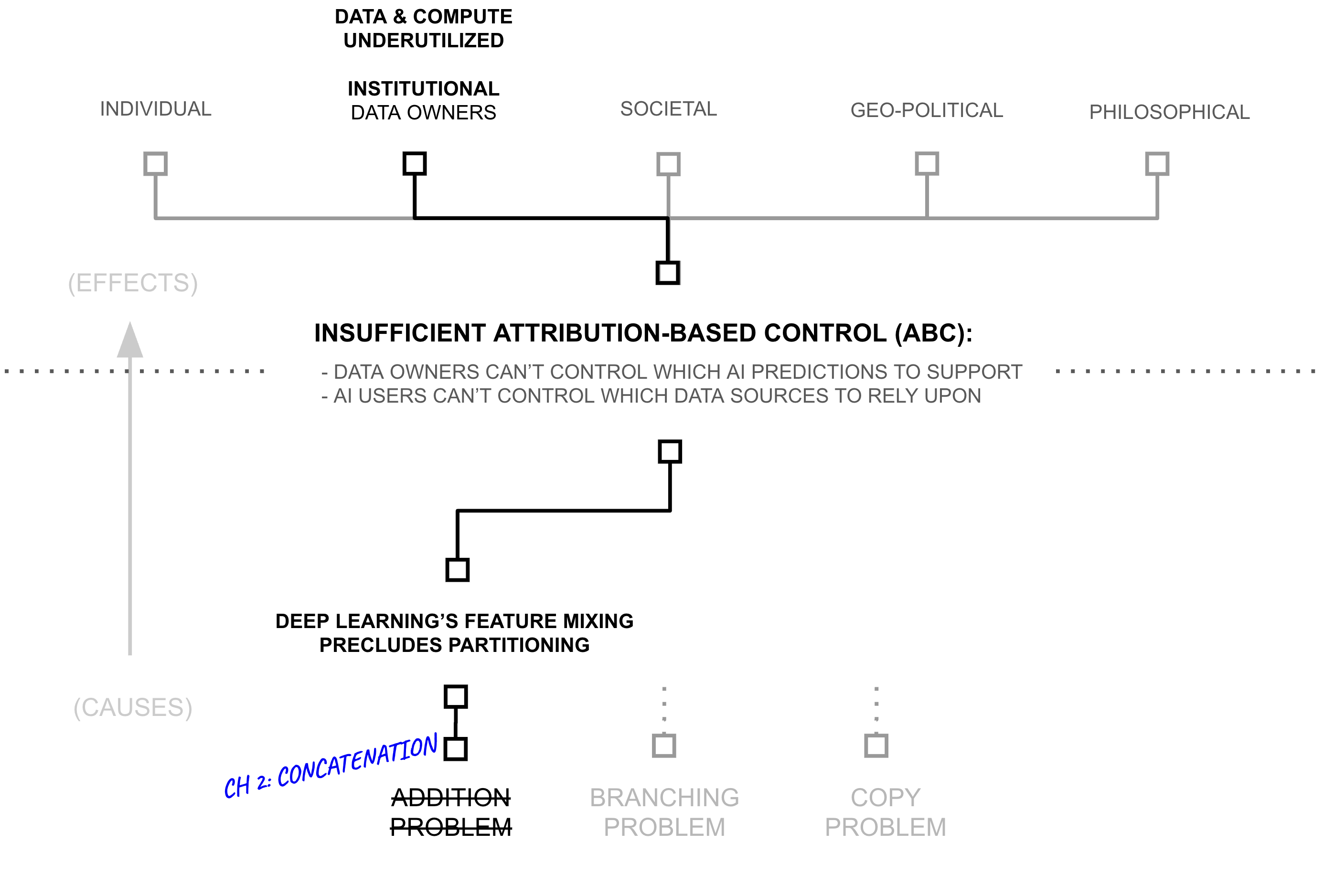

Third Hypothesis (Root Solution): Concatenating Along Natural Boundaries in Data Sources Enables Attribution

We now continue by constructing a hypothesis (the “Third Hypothesis”) which corresponds to the Third Why, which will support the Second Hypothesis addressing the Second Why and so forth. The previous sections revealed how addition in deep learning creates a fundamental barrier to attribution. Yet examining why addition fails suggests a testable hypothesis: can we significantly reduce the use of addition, perhaps swapping it with concatenation?

Deep learning’s central hypothesis would suggest we can’t, that features need to densely mix (using addition) in order to learn the powerful correlations and representations that give deep learning its predictive capability. However, presumably deep learning maps multiple distinct concepts into a shared feature when those two concepts are related (Krizhevsky et al., 2012). For example, a deep learning model which classifies images might have features which detect ears, fur, and eyes — features which would be useful for modeling many different animals which possess these related concepts (Zeiler and Fergus 2014). However, as these features are not laid out in advance, deep learning needs to densely mix its features in order to discover these related patterns across training datapoints. That is to say, perhaps over-zealous dense feature mixing is more about training than inference

Yet, perhaps deep learning is over-zealous in its feature mixing, opening itself to representation power which could mix any feature with any other when in the real-world not all concepts are closely related. That is to say, not all concepts require that level of general representation power. Perhaps some concepts are actually unrelated to one another, such that some proportion of dense feature mixing in deep learning is superfluous

That said, some concepts are densely mixed, while others are clearly less so. Some information patterns appear ubiquitously: basic rules of grammar that structure language, logical operations that appear in reasoning, morphological patterns which make up words, edges and corners in images, etc. Elements like these are frequently reused across almost every data point, appearing in many billions of documents, images, audio, and videos. Such dense patterns suggest unrestricted mixing through addition may be appropriate for a core subset of features. Their ubiquity also makes attribution less critical; they represent shared computational tools rather than source-specific claims about the world. While perhaps not formally stated, noted researcher Andrej Karpathy recently suggested a similar concept when referring to a future LLM “cognitive core”:

The race for LLM “cognitive core”—a few billion param model that maximally sacrifices encyclopedic knowledge for capability. It lives always-on and by default on every computer as the kernel of LLM personal computing. Its features are slowly crystalizing:

- Natively multimodal text/vision/audio at both input and output.

- Matryoshka-style architecture allowing a dial of capability up and down at test time.

- Reasoning, also with a dial. (system 2)

- Aggressively tool-using.

- On-device finetuning LoRA slots for test-time training, personalization and customization.

- Delegates and double checks just the right parts with the oracles in the cloud if internet is available

It doesn’t know that William the Conqueror’s reign ended in September 9 1087, but it vaguely recognizes the name and can look up the date. It can’t recite the SHA-256 of empty string as e3b0c442..., but it can calculate it quickly should you really want it.

What LLM personal computing lacks in broad world knowledge and top tier problemsolving capability it will make up in super low interaction latency (especially as multimodal matures), direct / private access to data and state, offline continuity, sovereignty (“not your weights not your brain”). i.e. many of the same reasons we like, use and buy personal computers instead of having thin clients access a cloud via remote desktop or so.

— Andrej Karpathy[link]

In contrast, perhaps most information is encyclopedic and appears sparsely: specific facts about the world, domain expertise in particular fields, claims made by individual sources, etc. The capital of France, the rules of chess, statistics about pizza... each appears in distinct contexts with limited overlap. As Chomsky noted in linguistics (Chomsky 2014), while we use common patterns to express all knowledge, the knowledge itself often remains naturally partitioned by topic, and when documents are topic specific... by source.

Let us assume for a moment that this is true. If so, then in theory some section of a neural network could be made sparse, namely the part of the neural network which stores concepts which are largely decoupled from the rest of a neural network’s knowledge (facts, domain expertise, semantic information, etc.). Perhaps this section could use less addition (and more concatenation), enabling sparsity which could drive attribution. Meanwhile, another part of the neural network might need to remain dense, storing and synthesizing concepts which are ubiquitous across a statistical distribution (logic, reasoning, syntax, etc.).

The key question: how would one go about training a neural network which successfully partitioned information into sparse and dense sections?

A key insight of this chapter is that techniques from privacy-preserving machine learning, particularly differential privacy (DP) (Dwork et al., 2006), provide a principled way to measure and control which features benefit from dense mixing versus sparse representation. Differential privacy quantifies how much a model’s outputs depend on any individual training example:

(ε, δ)-Differential Privacy

A randomized mechanism $\mathcal{M}: \mathcal{D} \to \mathcal{R}$ satisfies (ε, δ)-differential privacy if for all adjacent datasets $D, D' \in \mathcal{D}$ (differing in one example) and all subsets of outputs $S \subseteq \mathcal{R}$:

$\Pr[\mathcal{M}(D) \in S] \leq e^\epsilon \cdot \Pr[\mathcal{M}(D') \in S] + \delta$

where small ε indicates strong privacy—outputs barely depend on any individual example.

The parameter ε provides a quantitative measure of individual example influence on outputs. This same measure can serve three distinct control objectives:

Three Regimes of Influence Control

For a mechanism M, examples e, and thresholds 0 < τmin < τmax:

- Privacy (constrain influence): Enforce εe < τmin for all examples, guaranteeing that individual examples cannot be distinguished through their influence on outputs

- Measurement (track influence): Compute εe for each example, enabling quantification of which examples influence which outputs, without enforcing bounds

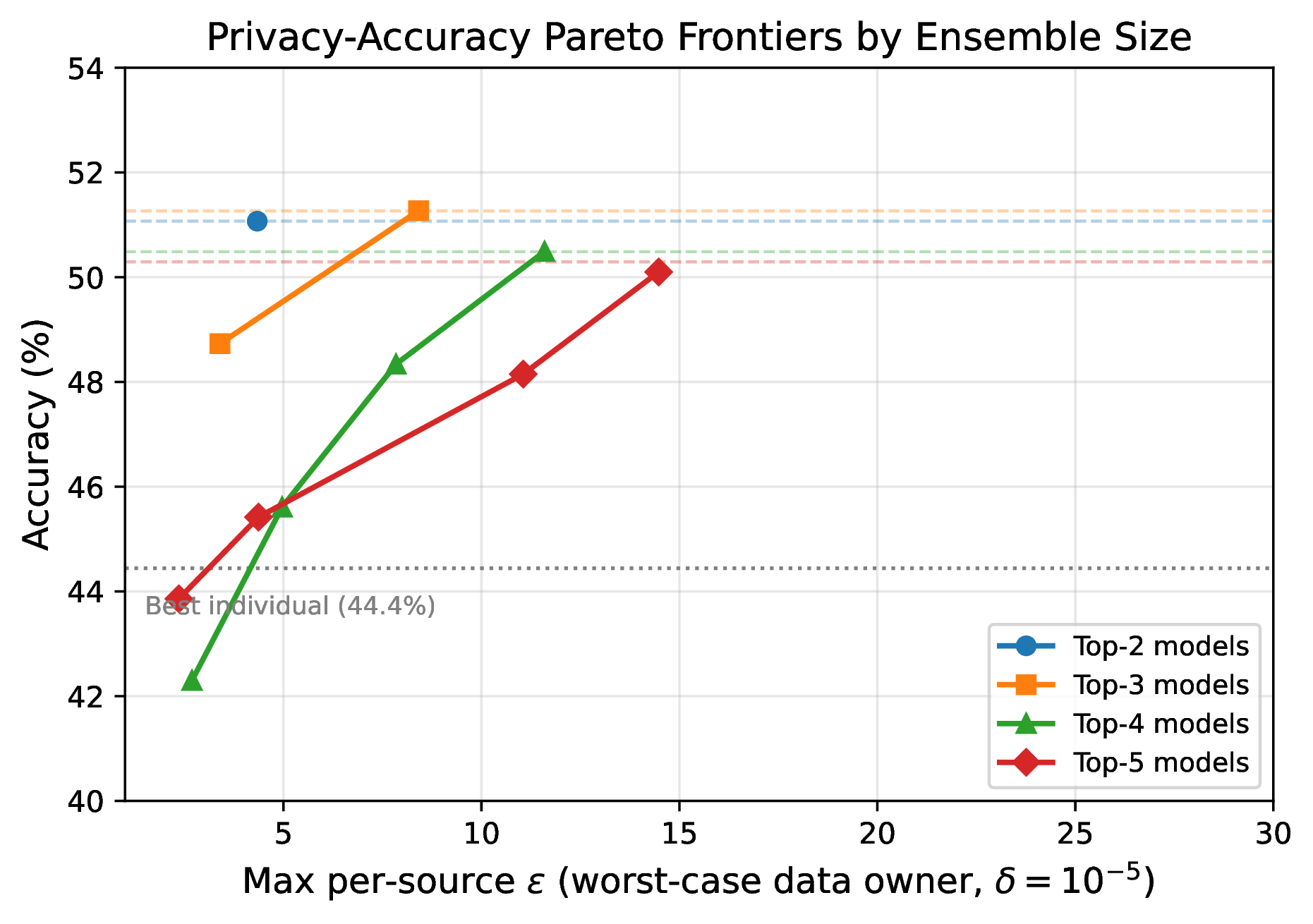

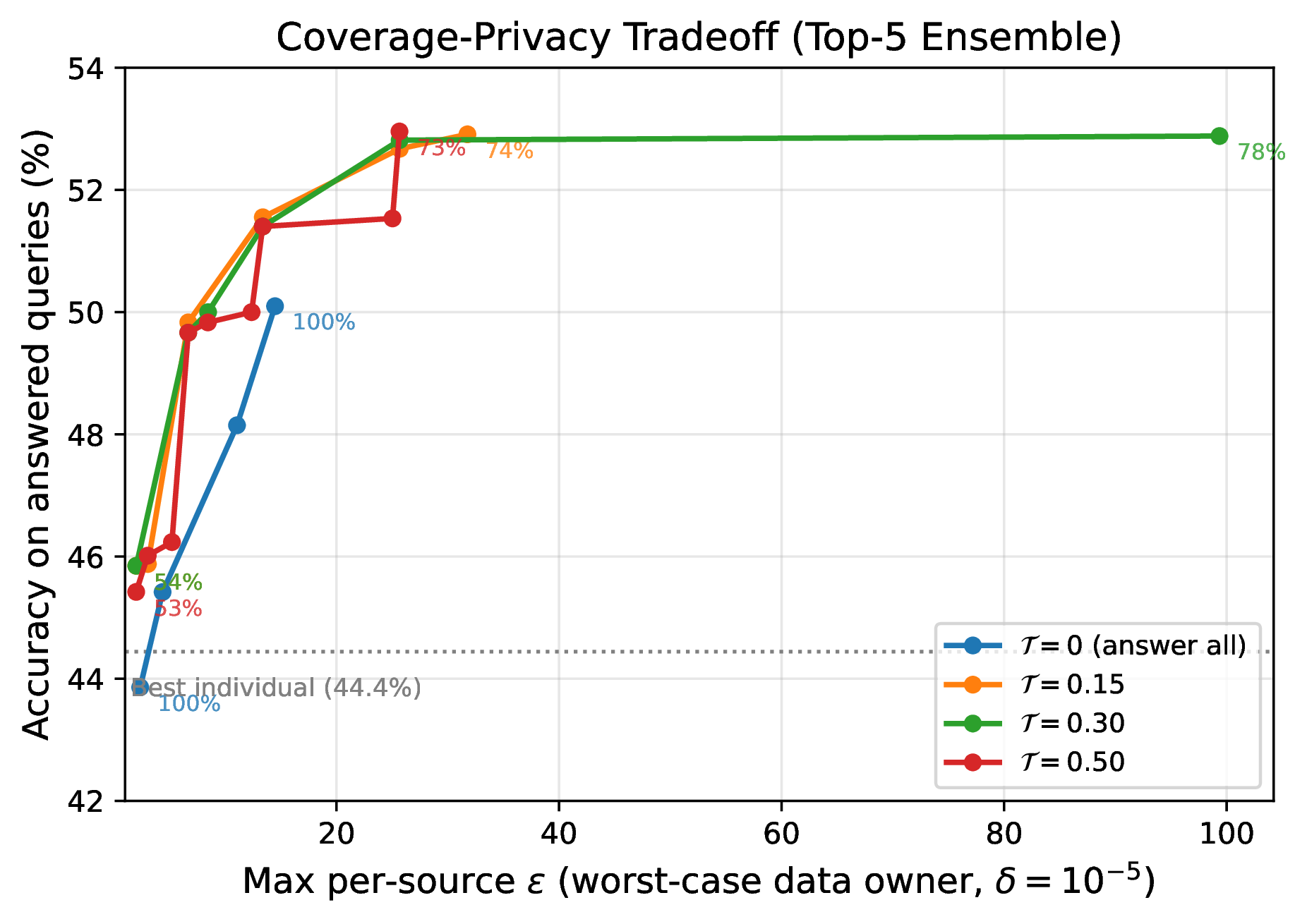

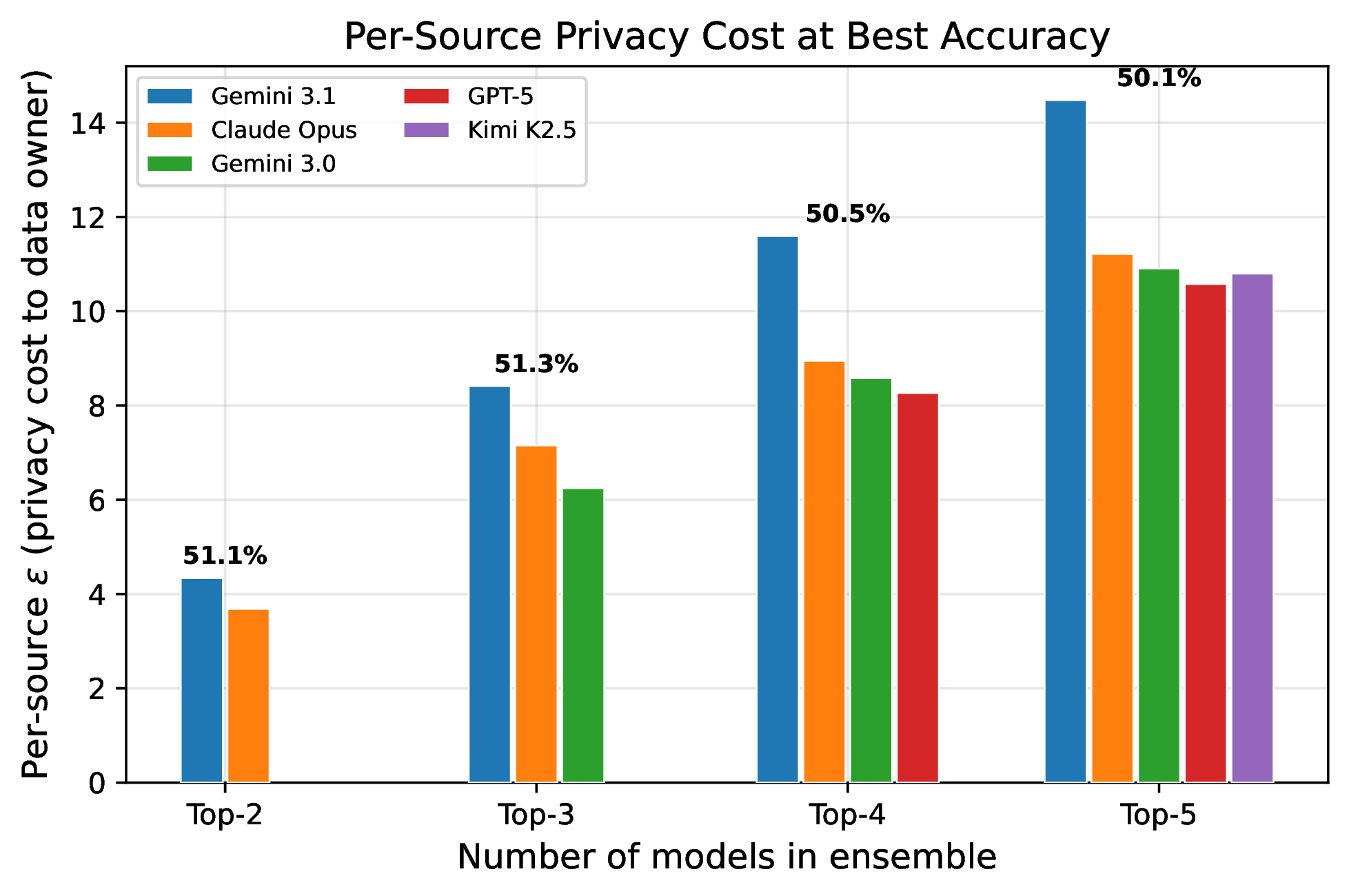

- Attribution (ensure influence): Enforce εe > τmax for specified examples, guaranteeing that certain examples have measurable influence on outputs